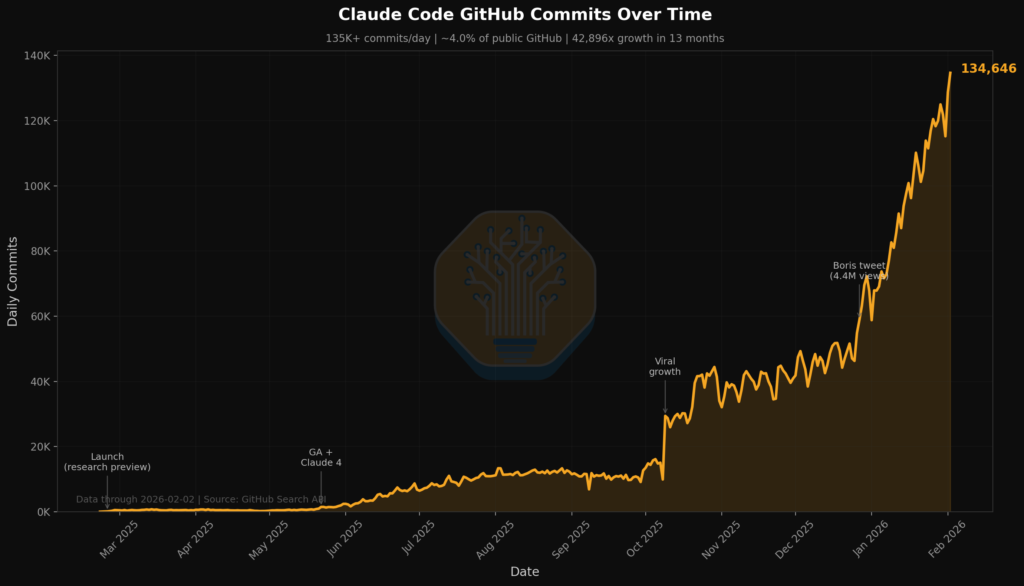

If you ask the AI coding tool Claude Code to commit source code directly to GitHub, it will by default add itself as an author with the commit message: “Co-Authored-By: Claude Opus”. Most people I work with do not trust Claude to commit anything at all by itself, and those who do trust it typically disable this commit message.

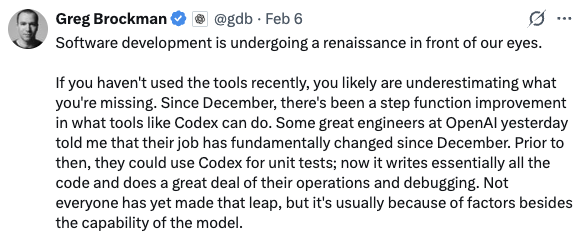

Now however, in February 2026, over 4% of all public GitHub commits contain this message. And the growth has been incredible. Greg Brockman, co-founder of OpenAI, said last week that “Since December, there’s been a step function improvement in what tools like Codex can do. Some great engineers at OpenAI yesterday told me that their job has fundamentally changed since December”. For me this change was GPT-5.2. It is the first AI model I have used that can consistently produce large volumes of very high quality source code. For others it was Claude Opus 4.5, for its amazing ability to solve almost any task given to it.

Source: Tokenomics Team, GitHub, Generated by Claude Code

At the current growth rate Claude Code will be responsible for over 20% of all public commits to GitHub by the end of this year. Add to that all AI commits done by humans, commits with the setting disabled, and commits done by OpenAI GPT, and you are potentially looking at around 30-50% of all public commits coming from an AI agent by the end of 2026.
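To get a feel for what that projection implies, here is a small sketch of the arithmetic. It assumes smooth compound growth from roughly 4% of public commits in February to 20% in December 2026, about ten months; the function name and the ten-month horizon are assumptions for illustration, not figures taken from the chart.

```python
def implied_monthly_growth(start_share: float, end_share: float, months: int) -> float:
    """Return the constant month-over-month growth rate that takes
    start_share to end_share in the given number of months."""
    if start_share <= 0 or end_share <= 0 or months <= 0:
        raise ValueError("shares and months must be positive")
    return (end_share / start_share) ** (1 / months) - 1


# Assumed inputs: 4% of public commits in February, 20% by December (10 months).
rate = implied_monthly_growth(0.04, 0.20, 10)
print(f"Implied growth: {rate:.1%} per month")
```

Under these assumptions the share of commits would need to grow by a bit over 17% every month for the rest of the year.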

If your organization uses VSCode and GitHub Copilot, Microsoft just unleashed a massive agentic AI update last Wednesday. This means that all your VSCode developers now have access to state-of-the-art AI models like Opus 4.6 and GPT-5.2-high and can control them with an advanced multi-agent interface. Some of your developers will start to produce 2-5 times more code than others, and you need a strategy to manage it. If you are a CTO or CIO, my best recommendation for you is to just dive straight in and try to build something yourself with AI. Try Opus 4.6 and GPT-5.2-high. They are very different models, so try both and learn their strengths. Only by doing it yourself will you truly understand the power of these tools.

“Our CTO hasn’t slept in 36 hours because he’s been obsessively and single-handedly building massive new features with Claude Code’s Agent Teams. I genuinely think this might be the biggest paradigm shift in how fast you can build since Claude Code first came out last year.” Alistair McLeay, Grw.ai

Thank you for being a Tech Insights subscriber!

Listen to Tech Insights on Spotify: Tech Insights 2026 Week 7 on Spotify

- AI-Supported Mammography Reduces Missed Breast Cancers

- SpaceX Acquires xAI in $1.25 Trillion Merger

- OpenAI and Snowflake Sign $200 Million Partnership

- OpenAI Launches Codex Desktop App for Multi-Agent Development

- OpenAI Frontier: Enterprise AI Agent Platform with Embedded Engineering Services

- PaperBanana Automates Academic Illustration Generation with Multi-Agent Framework

- Anthropic Launches Claude Opus 4.6 with Enhanced Coding and 1M Token Context Window

- OpenAI Launches GPT-5.3-Codex Agentic Coding Model

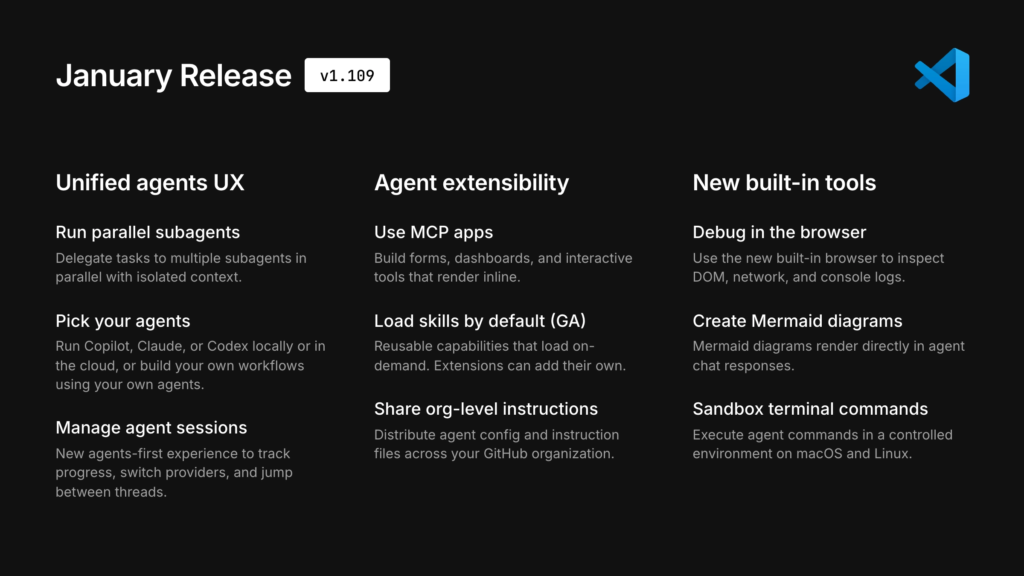

- Visual Studio Code 1.109 (January 2026) Brings Multi-Agent Development

- Apple Integrates Claude Agent and OpenAI Codex Into Xcode

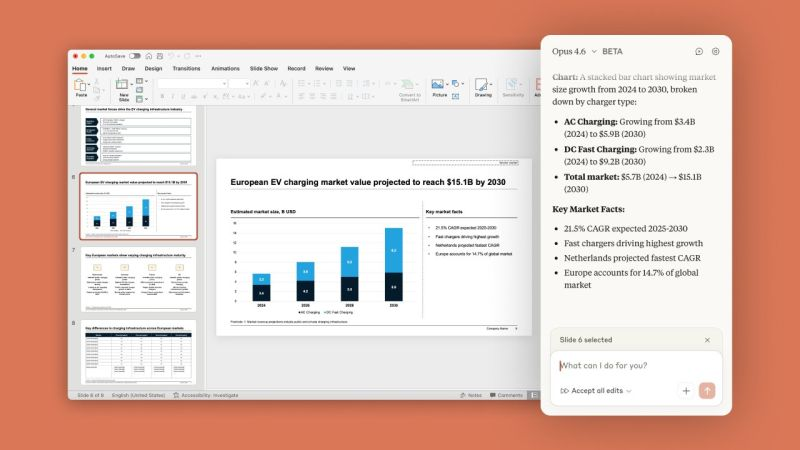

- Claude in PowerPoint Add-in Launches in Beta

- Kling AI Launches 3.0 Model Series with Multilingual Audio

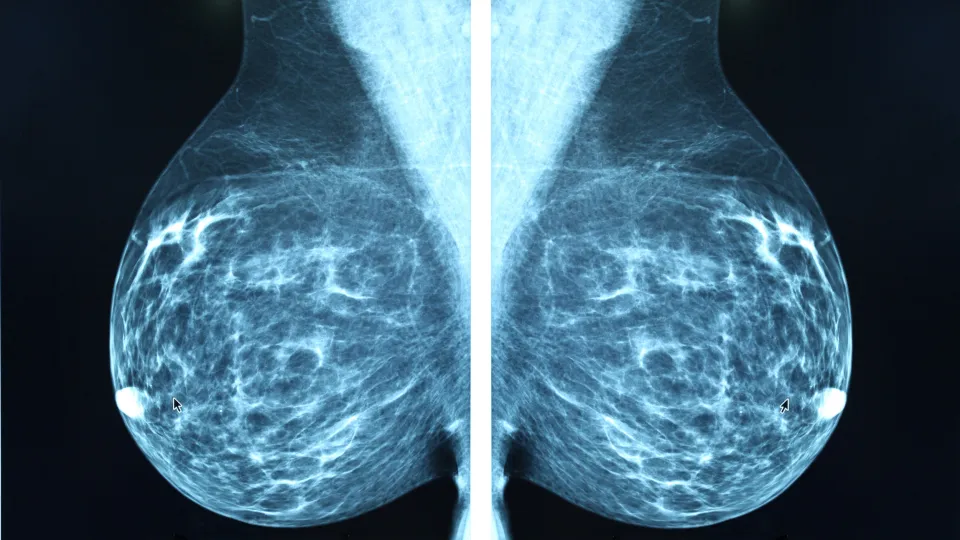

AI-Supported Mammography Reduces Missed Breast Cancers

https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(25)02464-X/abstract

Published: January 31, 2026

The News:

- The MASAI trial enrolled 105,000 women in Sweden over two years to test whether AI-supported screening reduces interval cancers, which are tumors discovered between regular screening appointments.

- ScreenPoint Medical’s Transpara Detection system analyzed mammograms and triaged cases, flagging high-risk scans for radiologist review.

- Detection sensitivity increased from 73.8% with standard double reading to 80.5% with AI support, while specificity remained identical at 98.5%.

- The AI group showed 27% fewer aggressive non-luminal A subtype cancers, 21% fewer large tumors (T2+), and 16% fewer invasive cancers reaching interval diagnosis.

- Radiologist workload decreased by 44% as the AI system handled initial triage and low-risk case filtering.
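To put the sensitivity figures above in more concrete terms, the sketch below converts them into missed cancers per 1,000 cancers. It is a simplification that treats sensitivity as the share of all cancers caught at screening, and the function name is made up for this example.

```python
def missed_per_1000(sensitivity: float) -> float:
    """Cancers missed at screening per 1,000 cancers, given sensitivity (0-1)."""
    return (1 - sensitivity) * 1000


standard = missed_per_1000(0.738)  # standard double reading, from the trial
with_ai = missed_per_1000(0.805)   # AI-supported screening, from the trial

relative_reduction = (standard - with_ai) / standard
print(f"Missed per 1,000 cancers: {standard:.0f} vs {with_ai:.0f}")
print(f"Relative reduction in missed cancers: {relative_reduction:.1%}")
```

Read this way, the reported sensitivities correspond to roughly 262 versus 195 missed cancers per 1,000, or about a quarter fewer.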

My take: 44% less workload and 27% fewer aggressive cancers – the results from this study are truly remarkable. I understand the need for two groups to benchmark the results, but it still feels like a missed opportunity for the women in the non-AI group, who had more cancers missed during the study simply because they were placed in the control arm. Maybe we can just conclude that AI helps a lot with both workload and cancer detection, skip further A/B trials, and focus on rolling out this technology to as many people as possible, as quickly as possible.


SpaceX Acquires xAI in $1.25 Trillion Merger

The News:

- SpaceX acquired xAI, Elon Musk’s artificial intelligence venture, in a merger completed February 2, 2026, creating a combined entity valued at $1.25 trillion. The acquisition consolidates Musk’s businesses, including rocket manufacturing, Starlink satellite internet, the Grok AI chatbot, and the X social media platform.

- The merger structured as a share exchange values SpaceX at $1 trillion, up from $800 billion in December 2025, and xAI at $250 billion. The exchange ratio converts one xAI share into 0.1433 shares of SpaceX stock.

- Musk stated the primary motivation is developing “orbital data centers” by integrating AI capabilities with SpaceX’s satellite infrastructure. The merger positions SpaceX to launch data centers into space to support AI infrastructure demands.

- xAI was rapidly depleting cash reserves while competing with OpenAI and Anthropic in expanding AI infrastructure. Tesla invested $2 billion in xAI in January 2026, just weeks before the SpaceX merger announcement.

- SpaceX plans a public offering later in 2026, potentially raising $50 billion to fund the combined entity’s operations. This marks the largest merger transaction by value on record.

My take: “Orbital data centers”. What a great idea, why not build a few of those when our humanoid robots are on their way to colonize Mars while we cruise around in our fully self-driving cars. Two weeks ago xAI announced that its new data center consumes more power than the entire city of San Francisco, and money was quickly running out. With SpaceX taking over ownership, xAI now has much more money available, and with SpaceX going public later in 2026, xAI will probably have all the cash it needs to continue improving its AI solutions for years to come. Maybe one day we will get orbital data centers too, but I would be very surprised if xAI initiated anything like that in the next few years.

OpenAI and Snowflake Sign $200 Million Partnership

https://openai.com/index/snowflake-partnership

The News:

- OpenAI and Snowflake entered a multi-year, $200 million partnership on February 2, 2026 that integrates OpenAI models directly into Snowflake’s platform, eliminating third-party cloud intermediaries.

- The partnership gives Snowflake’s 12,600 enterprise customers access to GPT-5.2 through Snowflake Cortex AI and Snowflake Intelligence across AWS, Google Cloud, and Microsoft Azure.

- Customers can build AI agents and custom applications grounded in their enterprise data using OpenAI models within Snowflake’s governed platform.

- Snowflake Intelligence enables natural-language queries across business data without requiring users to write code.

- Snowflake Cortex AI Functions allow teams to call OpenAI models directly from SQL to analyze rows, columns, text, images, and audio.

- The partnership includes engineering collaboration between Snowflake and OpenAI teams to develop features leveraging OpenAI’s Apps SDK, AgentKit, and enterprise workflow APIs.

- Canva’s Head of Data Science noted that “leveraging OpenAI models in Snowflake Cortex AI can help us extend that foundation” while maintaining security without compromising performance.

My take: Snowflake previously offered OpenAI integration through Microsoft Azure, and this is a major step towards “chatting with your warehouse”. If you are working with Snowflake, this should be at the top of your priority list to explore in the coming weeks.


OpenAI Launches Codex Desktop App for Multi-Agent Development

https://openai.com/index/introducing-the-codex-app

The News:

- OpenAI released the Codex app for macOS on February 2, 2026, a desktop interface that manages multiple coding agents simultaneously, runs parallel tasks, and handles long-running development work spanning hours to weeks.

- The app organizes agents in separate threads by project, includes built-in worktree support so multiple agents can work on the same repository without conflicts, and syncs with existing Codex CLI and IDE extension sessions.

- Skills extend Codex beyond code generation to tasks like implementing Figma designs with “1:1 visual parity”, managing Linear projects, deploying to Cloudflare/Netlify/Render/Vercel, and generating images via GPT Image. OpenAI maintains an open-source skills library.

- Automations run background tasks on schedules, landing results in a review queue. OpenAI uses these internally for daily issue triage, CI failure summaries, and release briefs.

- Codex includes two personality modes, terse and conversational, selectable via the /personality command across app, CLI, and IDE extension.

- OpenAI doubled rate limits across all paid tiers and temporarily added Codex to ChatGPT Free and Go plans. Usage doubled since GPT-5.2-Codex launched mid-December 2025, with over 1 million developers using Codex in the past month.

- The app uses native, open-source sandboxing that restricts agents to editing files in their working folder or branch and requires permission for elevated commands like network access.
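For readers who have not used worktrees before, here is a minimal sketch of the idea, assuming the app's worktree support builds on the standard `git worktree add` command. The helper only builds the commands and does not run them; the `agent/<name>` branch names and the sibling-directory layout are hypothetical choices for this example, not something the Codex app is documented to do.

```python
from pathlib import Path


def worktree_commands(repo: Path, agents: list[str]) -> list[list[str]]:
    """Build one `git worktree add` command per agent.

    Each agent gets its own branch and its own checkout directory next to
    the main repository, so parallel edits never touch the same files on disk.
    """
    commands = []
    for name in agents:
        branch = f"agent/{name}"                        # hypothetical naming scheme
        checkout = repo.parent / f"{repo.name}-{name}"  # sibling directory per agent
        commands.append(
            ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(checkout)]
        )
    return commands


for cmd in worktree_commands(Path("/work/myapp"), ["feature-a", "bugfix-b"]):
    print(" ".join(cmd))
```

Each printed command creates a separate checkout on its own branch, which is what lets several agents edit the same repository at once.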

My take: I have been testing the Codex app and all newly released AI models extensively the past week. And as for the Codex Desktop app, my recommendation for now is to avoid using it for one simple reason: it encourages vibe coding and AI slop. Let me explain why:

First, by default, the Codex app hides all code it generates while it’s working. You can turn the code view on, which I recommend, but by default it’s off. This means that most users working with this app have no clue what’s going on, and cannot interrupt it halfway if it’s clearly going in the wrong direction when writing the code. Of course, if you cannot read code this does not matter to you, and I guess this is also why the setting is off by default.

Second, and this is the main issue I have with it, it encourages working with different parallel tasks on the same code base using something called “work trees”. This means that each agent works in its own “virtual directory” and is not affected by the work done by other agents. The main problem with this approach is that you cannot reset context in an agent session that is working in its own work tree. Let me explain why this should be a major showstopper for you.

Source code written by AI models is never production ready on the first try. Even the latest and greatest models like “GPT-5.3-CODEX extra high” do not produce perfect code on the first try. This means that your average process should look something like this: (1) Design/plan/iterate. (2) -reset context-. (3) Let the AI write code based on your plan. (4) -reset context- (5) Let the AI review code / discuss changes / ask it to fix issues. Then go back to step (3). The iteration 3-4-5 is something you typically do many times if you are working with a production system before you commit any code to your repo.

With OpenAI Codex, you cannot reset context in a chat session, which means you have to work like this: (1) Ask AI to write code, (2) Let AI write code, (3) Commit code to production. There is no reason to let the AI review the code it wrote if you cannot reset the context memory, because it will just believe the code it just wrote is all good. Maybe OpenAI will fix this in the near future, but for now I treat OpenAI Codex for desktop like Lovable – an easy way for non-programmers to quickly get things done with some of the best AI models.
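The loop above can be sketched in a few lines of Python. `AgentSession` is a hypothetical stand-in that only records what happens; it is not the API of Codex, Claude Code, or any other tool, and the prompts are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSession:
    """Hypothetical stand-in for a coding-agent chat session."""
    log: list[str] = field(default_factory=list)

    def ask(self, prompt: str) -> None:
        self.log.append(f"ask: {prompt}")

    def reset_context(self) -> None:
        self.log.append("reset")


def run_workflow(session: AgentSession, rounds: int) -> list[str]:
    """Plan once, then repeat write -> reset -> review for `rounds` iterations."""
    session.ask("design and plan")                 # step 1
    session.reset_context()                        # step 2
    for _ in range(rounds):
        session.ask("write code from plan")        # step 3
        session.reset_context()                    # step 4
        session.ask("review code and fix issues")  # step 5, then back to step 3
    return session.log


for entry in run_workflow(AgentSession(), rounds=2):
    print(entry)
```

The point of the reset before each review is that the reviewing pass starts without the context in which the code was written.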

OpenAI Frontier: Enterprise AI Agent Platform with Embedded Engineering Services

https://openai.com/index/introducing-openai-frontier

The News:

- OpenAI Frontier is an end-to-end platform for building, deploying, and managing AI agents in enterprise environments, bundled with professional services from OpenAI engineers who embed directly within customer organizations. The platform addresses the gap between AI pilots and production deployments by combining technology with hands-on implementation support.

- Forward Deployed Engineers (FDEs) are full-stack software engineers employed by OpenAI who work onsite or remotely with customers for extended periods, owning the entire deployment from business process mapping through production launch. The team launched in early 2025 under Colin Jarvis and is projected to exceed 100 members by mid-2026.

- FDEs create a direct feedback loop between customer deployments and OpenAI Research, channeling real-world production limitations directly to model development teams. This bidirectional connection influences both platform improvements and future model evolution based on enterprise use cases.

- The platform connects siloed enterprise systems (CRM, data warehouses, ticketing tools) using standard protocols including Model Context Protocol, providing shared business context across agents. Built-in evaluation tracks agent performance over time with metrics for success rates, accuracy, and response times. Security includes SOC 2 Type II and ISO 27001/27017/27018/27701 compliance with individual agent identities and audit logging.

- Early customers include HP, Intuit, Oracle, State Farm, Thermo Fisher, and Uber. A hardware manufacturer reduced root-cause debugging from four hours to minutes, a major manufacturer compressed production optimization from six weeks to one day, and a global investment company freed up over 90% more time for salespeople.

- Frontier runs on OpenAI-managed infrastructure, not customer premises. OpenAI launched EU data residency in February 2025, allowing organizations to process all data within the European Economic Area and Switzerland for GDPR compliance. The service is “available today to a limited set of customers, with broader availability coming over the next few months”.

My take: Creating AI agents is difficult, and the more complex the models become and the more they can manage, the more skill you need to integrate them into your business. I think this is why OpenAI launched this initiative, an in-house consultancy service to help large enterprises get started with agentic development. That said, there are several specialist companies outside the US with these skills that are not OpenAI, and my recommendation as always is that if you plan to roll out agentic AI within your organization, get external assistance from a company that has made this journey a few times before.

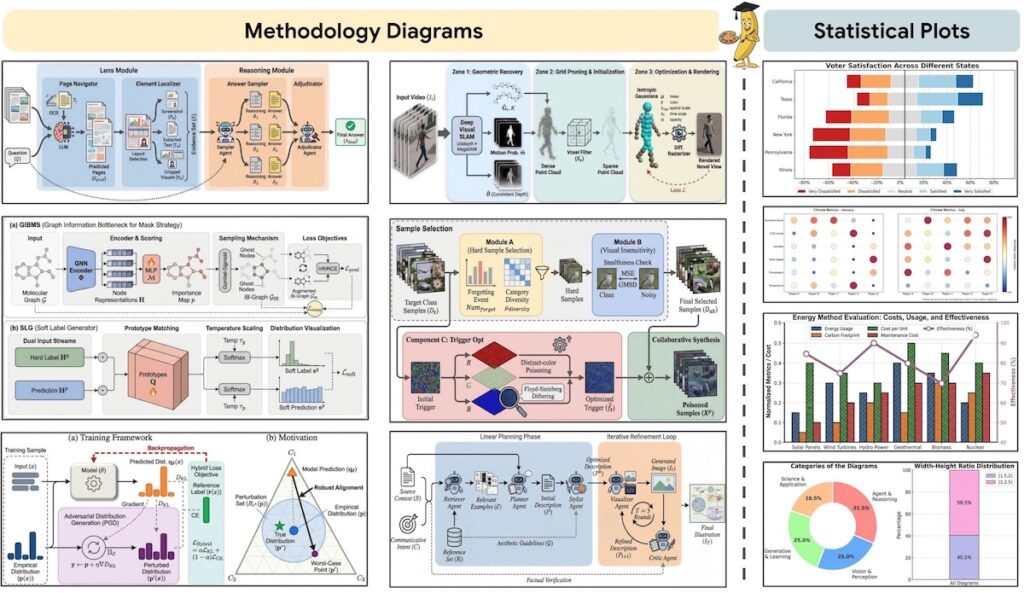

PaperBanana Automates Academic Illustration Generation with Multi-Agent Framework

https://arxiv.org/abs/2601.23265

Published: January 29, 2026

The News:

- PaperBanana addresses the manual bottleneck of creating academic illustrations through an agentic framework that generates publication-ready diagrams and statistical plots from scientific text.

- The system orchestrates five specialized agents: Retriever, Planner, Stylist, Visualizer, and Critic. These agents retrieve references, plan content and style, render images, and iteratively refine outputs through self-critique.

- PaperBananaBench, introduced alongside the framework, contains 292 test cases for methodology diagrams curated from NeurIPS 2025 publications. The benchmark includes an average source context length of 3,020.1 words and figure caption length of 70.4 words.

- Experiments show PaperBanana outperforms leading baselines across four dimensions: faithfulness, conciseness, readability, and aesthetics.

- The framework extends to statistical plot generation and can enhance aesthetics of existing human-drawn diagrams by applying auto-summarized style guidelines.

- Primary failure mode involves connection errors such as redundant connections and mismatched source-target nodes. The critic model often fails to identify these errors, suggesting limitations in the foundation model’s perception capabilities.
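As a rough illustration of the generate-then-critique pattern described above, here is a minimal sketch. It is not the PaperBanana implementation: the function signature, the plain-string data types, and the `max_rounds` limit are all assumptions, and each agent is passed in as an ordinary callable.

```python
from typing import Callable


def generate_figure(
    text: str,
    retrieve: Callable[[str], list[str]],
    plan: Callable[[str, list[str]], str],
    style: Callable[[str], str],
    render: Callable[[str], str],
    critique: Callable[[str, str], str],
    max_rounds: int = 3,
) -> str:
    """Run retrieve -> plan -> style -> render, then refine with critic feedback."""
    references = retrieve(text)
    spec = style(plan(text, references))
    image = render(spec)
    for _ in range(max_rounds):
        feedback = critique(image, text)
        if not feedback:  # empty feedback means the critic found nothing to fix
            break
        spec = f"{spec} | revise: {feedback}"
        image = render(spec)
    return image
```

In this sketch the loop stops as soon as the critic returns no feedback, so a critic that overlooks a wrong connection lets the flawed figure through unchanged.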

My take: This work was done by Google DeepMind, so let’s all hope that PaperBanana is released as a plugin to Gemini in the next few months. This is something most of us would use for all our presentations, and if it truly works as well as they say in the paper, this would change everything about how we make scientific presentations going forward.

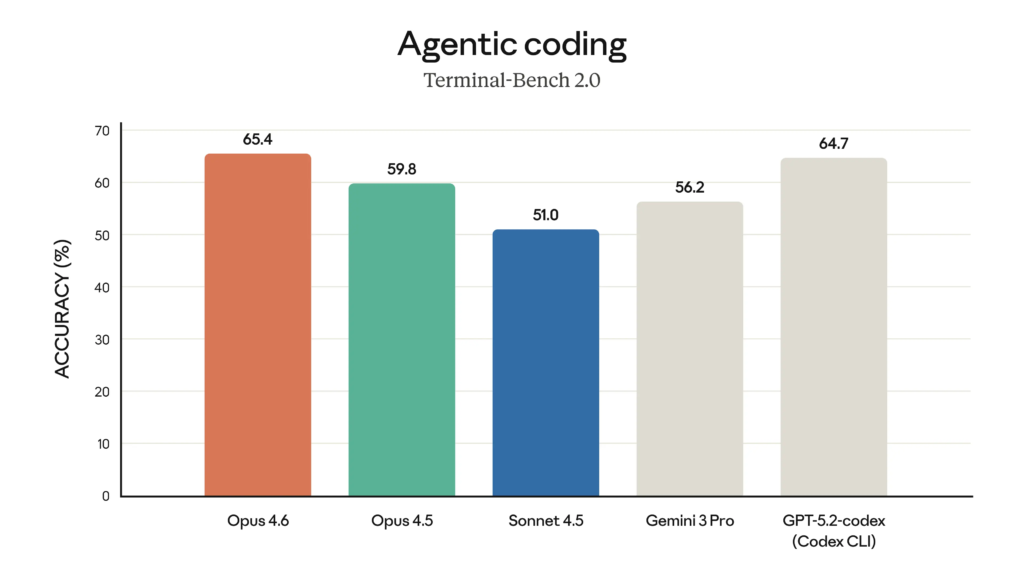

Anthropic Launches Claude Opus 4.6 with Enhanced Coding and 1M Token Context Window

https://www.anthropic.com/news/claude-opus-4-6

The News:

- Anthropic released Claude Opus 4.6 on February 4, 2026. The model targets coding tasks, agentic workflows, and long-context operations. Pricing remains $5/$25 per million input/output tokens.

- Opus 4.6 achieves 80.8% on Terminal-Bench 2.0 for agentic coding, outperforms GPT-5.2 by 144 Elo points on GDPval-AA economic tasks, and scores highest on Humanity’s Last Exam for multidisciplinary reasoning.

- The model introduces a 1M token context window in beta, a first for Anthropic’s Opus-class models. On the 8-needle MRCR v2 benchmark, it scores 76% compared to Sonnet 4.5’s 18.5%, reducing context degradation in long conversations.

- Opus 4.6 includes adaptive thinking that adjusts reasoning depth automatically, four effort levels (low, medium, high, max), context compaction to avoid hitting token limits, and 128K output token support.

- Anthropic deployed the model to find 500+ previously unknown high-severity vulnerabilities in open-source libraries including Ghostscript, OpenSC, and CGIF without task-specific tooling.

- Claude Code now supports agent teams in research preview, allowing multiple agents to work in parallel on independent tasks. Claude in Excel handles multi-step changes, and Claude in PowerPoint launched in research preview.
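To translate the list price above into a budget, here is a small sketch of the arithmetic. It assumes the flat $5/$25 per million tokens quoted in the announcement and ignores any caching discounts or long-context pricing that may apply; the token counts in the example are made up.

```python
INPUT_USD_PER_MILLION = 5.0    # list price for input tokens, from the announcement
OUTPUT_USD_PER_MILLION = 25.0  # list price for output tokens, from the announcement


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of a request at the flat list price."""
    return (
        input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    )


# Hypothetical agentic session: 800K tokens read, 60K tokens written.
print(f"${estimate_cost_usd(800_000, 60_000):.2f}")
```

In this hypothetical session the input side costs $4.00 and the output side $1.50, even though the agent reads more than ten times as many tokens as it writes.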

My take: Going by specifications alone this model looks amazing. It has best-in-class context retrieval, best-in-class performance on GDPval, and ships with “adaptive thinking” with four different reasoning levels. However, I have used it extensively over the past week for several complex programming tasks (the kinds of tasks you need at least 10 000 hours of coding experience to feel comfortable with), and my conclusion is that it’s slightly better than Opus 4.5 but still nowhere near GPT-5.2 for complex reasoning chains.

GPT-5.2 “high” and “extra high” both feel like the biggest improvements in LLMs since GPT-3 was released in 2020. They solve programming tasks that previously could only be done by very senior engineers. Opus 4.6, just like Opus 4.5, still feels too unreliable, too quick to act, and too eager to be trusted in any kind of complex production system. Use it for CSS, UX, scripts, and scaffolding. But use GPT for doing the actual programming.


OpenAI Launches GPT-5.3-Codex Agentic Coding Model

https://openai.com/index/introducing-gpt-5-3-codex

The News:

- OpenAI released GPT-5.3-Codex, an agentic coding model that handles long-running software development tasks, research, and computer operations. The model runs 25% faster than its predecessor and operates autonomously while allowing real-time steering without context loss.

- GPT-5.3-Codex scored 56.8% on SWE-Bench Pro (spanning four programming languages), 77.3% on Terminal-Bench 2.0 (terminal operations), and 64.7% on OSWorld-Verified (desktop productivity tasks). The model uses fewer tokens than prior versions on identical tasks.

- The Codex team used early versions of GPT-5.3-Codex to debug its own training, manage deployment, and diagnose test results. OpenAI researchers describe their workflow as “fundamentally different” from two months prior.

- GPT-5.3-Codex creates complex web applications from underspecified prompts, building games with multiple maps, items, and mechanics over millions of tokens. Examples include a racing game with eight maps and a diving game with oxygen and pressure mechanics.

- OpenAI classified GPT-5.3-Codex as “High capability” under its Preparedness Framework for cybersecurity tasks. The company launched a $10M API credit program for security research and a Trusted Access pilot for cyber defense work.

My take: As with Opus 4.6, I have also tested OpenAI GPT-5.3-Codex extensively over the past week. My conclusion is this: all “CODEX” model variants by OpenAI are tuned for quick results. They also have a different system prompt that further emphasizes this. They are an excellent complement to the regular, slower versions of GPT. But they do not replace them.

Ask GPT-5.3-CODEX-extra-high to do a large refactor in your code base, and it will do it quickly and the code will run fine. It solves the task, but it will miss minor things that make the code more robust and reliable, such as handling special cases and guarding against incorrect future usage. In one project I keep all my text strings in language files, and twice in the past day GPT-5.3-CODEX forgot about these files and hard-coded strings straight into the code. That has never happened even once with GPT-5.2. That said, GPT-5.3-CODEX is quick, very quick, so use it for all not-so-complex and non-critical tasks, but keep using GPT-5.2 for the heavy stuff until the regular 5.3 model is released, hopefully later this week.

Visual Studio Code 1.109 (January 2026) Brings Multi-Agent Development

https://code.visualstudio.com/updates/v1_109

The News:

- VS Code 1.109 positions the editor as a unified platform for multi-agent development, with support for running multiple AI agents (GitHub Copilot, Claude, and Codex) concurrently across local, background, and cloud environments.

- The Plan agent now follows a four-phase workflow (discovery, alignment, design, refinement) and uses the new askQuestions tool to request clarifying information from users before generating implementation plans.

- Subagents now execute in parallel rather than sequentially, operating in isolated context windows to prevent context overflow in the main agent.

- Anthropic models include thinking token visualization, Messages API support with interleaved reasoning, and a configurable thinking budget.

- Agent Skills become generally available, providing specialized workflows and domain knowledge, with extensions able to contribute skills via the chatSkills package.json field.

- Custom agents support user-invokable and disable-model-invocation frontmatter attributes to control how agents are triggered and which subagents they can invoke.

- Terminal sandboxing (macOS and Linux only) restricts agent-executed commands to workspace directories and blocks network access by default, with auto-approval rules for safe operations like Set-Location, dir, and npm list.

- Copilot Memory (preview) stores user preferences across sessions via the memory tool (github.copilot.chat.copilotMemory.enabled).

- MCP Apps now render interactive visualizations, dashboards, and forms directly in chat responses using the renderMermaidDiagram tool.

My take: This is a MASSIVE release by Microsoft that will have implications for all companies working with software development. If your company uses VSCode and GitHub Copilot you need to be prepared for this release.

First of all, Microsoft has finally caught up with Codex and Claude Code when it comes to interacting with AI agents. You now have a solid plan mode where the agent asks you clarifying questions, subagents that work in parallel, agent skills, and MCP apps. What you still do not have in VSCode is the agentic-first mindset. Copilot is still a chat window in a regular code editor. This is not an AI-first environment. The way most people work with agentic engineering today is to use multiple chat instances with an individual worktree for each agent. This means your productivity scales with the number of agents you can keep running at the same time. VSCode does not support this, and to make multiple chat windows work efficiently you basically have to launch multiple VSCode instances in parallel. So while these are great steps in the right direction for Microsoft, they are far from the leanness you get when working with Claude Code or Codex in 5+ terminal windows in different worktrees.

For most developers new to agentic programming, however, this is not an issue. It will take months before you get up to speed with prompting and reviewing so you can run 10+ AI instances in parallel and be productive with it. For companies that are exclusively using VSCode and GitHub Copilot, this means that you can now initiate 100% AI-prompted agentic development. Roll out Opus 4.6 and GPT-5.2 to all your developers and enable the “high” setting for both. Allow each programmer up to 3 000 premium requests per month and encourage them to move complex tasks to the AI. My guess is that by the time most developers feel comfortable with prompted agentic programming, the VSCode UI will look completely different when used in a multi-agent setup.

Peter Steinberger, author of PSPDFKit and OpenClaw, comfortably working with 13 Codex instances running in parallel worktrees simultaneously.


Apple Integrates Claude Agent and OpenAI Codex Into Xcode

The News:

- Apple released Xcode 26.3 with support for agentic coding tools, including Anthropic’s Claude Agent and OpenAI’s Codex, which run directly within Apple’s IDE.

- The agents access Apple’s developer documentation to reference current APIs and best practices during code generation.

- Agents can explore project structure, build projects, run tests, identify errors, and implement fixes autonomously.

- Xcode uses Model Context Protocol (MCP) to expose capabilities to agents, making it compatible with any MCP-compatible agent for project discovery, file management, previews, and documentation access.

- Developers select model versions through a dropdown menu (such as GPT-5.2-Codex vs. GPT-5.1 mini) and issue natural language commands through a prompt box.

- The system breaks tasks into smaller steps with visual highlighting of code changes and a project transcript showing the agent’s decision-making process.

- Xcode creates milestones at each agent change, allowing developers to revert code to any previous state.

My take: I linked to the article at TechCrunch since it highlights just how hard it can be to keep up with all this news. The article states that Xcode 26.3 will “allow developers to use agentic tools, including Anthropic’s Claude Agent and OpenAI’s Codex, directly in Apple’s official app development suite”. This is of course not correct – users will be able to use the two models Anthropic Claude and OpenAI GPT-5.2-CODEX to create source code within Xcode.

Much like the update to VSCode above, Xcode is a monolith where AI is still an add-on. It will be interesting to see just how far Apple and Microsoft can take their current Xcode and VSCode platforms when it comes to agentic AI. I believe the development interface of the future does not look anything like the traditional IDE, and maybe it would be best to keep Xcode and VSCode as they are right now and just start over with something new in parallel instead of patching these old tools to do something they were not designed for from the start.

Claude in PowerPoint Add-in Launches in Beta

https://support.claude.com/en/articles/13521390-using-claude-in-powerpoint

The News:

- Anthropic released Claude in PowerPoint as a beta add-in on February 5, 2026, available to Max, Team, and Enterprise subscribers.

- The add-in reads slide masters, layouts, fonts, and color schemes to generate content that maintains template compliance.

- Users can generate full deck structures from natural language descriptions, edit specific slides without regenerating entire presentations, and convert bullet points into native PowerPoint charts and diagrams.

- The add-in offers two model options, Sonnet 4.5 and Opus 4.6, selectable within the PowerPoint interface.

- Anthropic documented prompt injection attack risks, noting that malicious instructions hidden in external templates could extract sensitive information, modify financial records, or perform destructive actions.

- Chat history does not persist between sessions, and Enterprise audit logs and Compliance API integration are not yet available.

My take: In my fairly limited tests this works quite well. Add Claude as a PowerPoint plugin, and it can generate full presentation decks for you. It’s not perfect: objects typically do not align well, text overflows, and so on. But it definitely gives you a good starting point if you’re starting from scratch or are in a rush.

Kling AI Launches 3.0 Model Series with Multilingual Audio

https://twitter.com/Kling_ai/status/2019064918960668819

The News:

- Kling AI released version 3.0 on February 5, 2026, introducing Video 3.0, Video 3.0 Omni, Image 3.0, and Image 3.0 Omni.

- The platform extends video generation to 15 seconds per clip, up from the 10-second limit in version 2.6.

- Native audio generation supports English, Chinese, Japanese, Korean, Spanish, and multiple regional accents including American, British, and Indian.

- The system handles multi-character dialogue scenes where each character speaks a different language with user-specified speaking order.

- Image models now output 2K and 4K ultra-high-definition content.

- Early access is available to Ultra subscribers with public release following soon.

My take: This is a very strong video generation model, and Curious Refuge’s R&D gives it an 8.1 / 10 rating as “the highest-scoring AI video model we’ve reviewed to date” in image-to-video workflows. If you have a minute, check the Kling 3.0 launch video (“Kling 3.0 example from the official blog post”, r/singularity) – we have really come far when it comes to image-to-video generation over the past year!
