The main AI news of last week were the public announcements from Anthropic, OpenAI and the Department of War, where most people on social media seemed to summarize this as:

- The Department of War (DoW) wants to use AI models for “all lawful purposes”.

- Anthropic wants to add two restrictions: mass domestic surveillance and fully autonomous weapons should be excluded. The DoW declines.

- Trump posts on Truth Social that all federal agencies must “IMMEDIATELY CEASE” using Anthropic’s technology, with a limited six‑month transition window for the Pentagon and a few other agencies.

- Defense Secretary Hegseth calls Anthropic “a supply chain risk to national security”, warning that contractors who want to keep doing business with the DoW will have to sever all commercial ties with Anthropic.

- The day after the Department of War signs an agreement with OpenAI, where the contract allows for “all lawful purposes”.

- = Public outrage against OpenAI.

Artists like Katy Perry even publicly posted on X that she has left ChatGPT and signed up for an Anthropic Pro plan, and it has actually been difficult to navigate through social media in recent days due to all these “Vote with your wallet” posts. So, I spent a significant amount of time reading up on everything I could on this matter, and in this case it is clear that very little is known about what is actually going on behind the scenes here.

First off. Anthropic is a much smaller company than OpenAI. They have around 20-30 million monthly active users, while OpenAI has over 900 million weekly active users. When it comes to the Department of War (DoW), the way the models are deployed is different between Anthropic and OpenAI. When it comes to Anthropic, the models used by DoW are not hosted by Anthropic themselves, meaning they cannot maintain ongoing control over the safety stack once the models are deployed. Having a legally binding document saying that the models cannot be used for something related to fully autonomous weapons means that it is DoW’s responsibility to make sure no-one tries this, otherwise it might be significant legal actions involved. The legal discussions between DoW and Anthropic has been ongoing for months, and Axios reported two weeks ago already that the DoW might classify Anthropic as a supply chain risk if the discussions are stranded.

OpenAI on the other hand provides their own cloud hosting for the Department of War (DoW), which means that they can enforce these limits within their own cloud environment, using their safety stack and classifiers to monitor and restrict usage. OpenAI summarizes this in a formal post published on Saturday February 28:

We have three main red lines that guide our work with the DoW, which are generally shared by several other frontier labs:

- No use of OpenAI technology for mass domestic surveillance.

- No use of OpenAI technology to direct autonomous weapons systems.

- No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

And:

We think our red lines are more enforceable here because deployment is limited to cloud-only (not at the edge), keeps our safety stack working in the way we think is best, and keeps cleared OpenAI personnel in the loop. We don’t know why Anthropic could not reach this deal, and we hope that they and more labs will consider it.

The two key primary sources I recommend reading are:

- Statement from Dario Amodei on our discussions with the Department of War \ Anthropic

- Our agreement with the Department of War | OpenAI

My recommendation is that you read these two posts and build your own view of what happened before rushing to conclusions based on Internet influencers. There will continue to be lots of speculations and strong feelings about this topic the coming week, but when it comes to actual facts we only have these two articles for now. And maybe don’t rush to cancel your ChatGPT subscription just yet. OpenAI is very clear that they do not provide the DoW with a “guardrails off” or a non-safety trained model.

Thank you for being a Tech Insights subscriber!

Listen to Tech Insights on Spotify: Tech Insights 2026 Week 10 on Spotify

- AGENTS.md Files Hurt More Than They Help

- OpenAI Drops SWE-bench Verified

- Spotify Expands Prompted Playlist Beta to UK, Ireland, Australia, and Sweden

- Google Releases Nano Banana 2

- Perplexity Launches “Computer”

- 7 of 12 xAI Co-Founders Have Now Left the Company

- Anthropic Adds Remote Control to Claude Code

- Claude Code Gets Auto Memory, /simplify and /batch

- Anthropic Releases Responsible Scaling Policy Version 3.0

- OpenAI Adds WebSocket Mode to the Responses API

- Taalas HC1 Delivers 17,000 Tokens Per Second

- Inception Labs Releases Mercury 2

AGENTS.md Files Hurt More Than They Help

https://arxiv.org/abs/2602.11988

The News:

- ETH Zurich evaluated whether repository-level context files like AGENTS.md improve coding agent performance, as tools such as Claude Code, Cursor, and GitHub Copilot actively recommend them across 60,000+ open-source repositories.

- LLM-generated context files reduced task success rates in 5 of 8 settings, with performance drops of 0.5 to 2 percentage points on SWE-bench Lite and the new AGENTbench benchmark.

- All context file types increased inference costs by 20 to 23% and added 2.45 to 3.92 extra agent steps per task.

- Developer-written files showed ~4 percentage point improvement on AGENTbench, but only when existing repository documentation was absent.

My take: If you are developing software with AI then stop what you are doing and spend some time with this article. To summarize: Never let the LLM write the AGENTS.md file. Write it yourself and make sure it only contains repository-specific constraints that agents cannot discover independently. And keep it short. Putting in weak or irrelevant guidelines just make it harder for the AI agents to work in your repo.

Read more:

OpenAI Drops SWE-bench Verified

https://openai.com/index/why-we-no-longer-evaluate-swe-bench-verified

The News:

- OpenAI published a report on February 23, 2026, announcing it has stopped reporting scores on SWE-bench Verified, the benchmark it created in 2024 to measure AI performance on real-world software engineering tasks sourced from GitHub issues.

- An audit of 138 problems that OpenAI’s o3 model frequently failed found that 59.4% contain material test design flaws: 35.5% use narrow tests that reject functionally correct code by requiring specific implementation details not stated in the problem, and 18.8% test for additional functionality never described in the problem description.

- All three frontier models tested, GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash, showed signs of training contamination. In controlled red-teaming sessions, the models reproduced exact gold-patch code, including specific variable names, function names, and even verbatim inline comments from diffs, despite this information not appearing in the problem prompt.

- Because SWE-bench Verified is built from public open-source repositories, OpenAI concludes that benchmark scores now reflect training-data exposure as much as genuine coding ability. Progress on Verified slowed to a 6-point gain (74.9% to 80.9%) over the last six months, a signal that saturation, not true capability limits, has been reached.

- OpenAI recommends SWE-bench Pro, developed by Scale AI, as an interim replacement, and is investing in privately authored benchmarks like GDPVal, where tasks are written by domain experts and graded holistically by human reviewers.

My take: If you have been following my newsletter you know my perspective on SWE-bench, which is that we should have stopped using it long ago. SWE-bench Verified was launched in August 2024, just after we got the first AI models that could write a few lines of code that actually worked, like GPT-4o and Sonnet 3.5. SWE-bench Verified has 500 tests where the median fix requires changing just 4 lines of code. And 161 of the 500 tests requires changing only 1-2 lines of code. This is how most of us used AI for programming up to around a year ago.

So in September 2025 OpenAI launched SWE-bench Pro. Where SWE-bench Verified requires changing on average 4 lines of code, SWE-bench Pro requires changing an average of 107 lines across 4.1 files. This is more representative of how we use AI agents today, but right now the way this test runs is not yet standardized – depending on the scaffolding and cost caps, model results can vary greatly. I am guessing OpenAI will present SWE-bench Pro results when they launch their next model, and probably also standardize how models should be configured when executing the test. Now if we could only get a test that measures code quality, duplication, performance and robustness I would be more than happy.

Read more:

- SWE-Bench Pro: Can AI Agents Solve Long-Horizon Software Engineering Tasks? | Scale AI

- SWE-Bench Pro (Public Dataset) | SEAL by Scale AI

Spotify Expands Prompted Playlist Beta to UK, Ireland, Australia, and Sweden

https://newsroom.spotify.com/2026-02-23/prompted-playlist-prompts-to-try

The News:

- Spotify’s Prompted Playlist feature, first tested in New Zealand in December 2025 and expanded to the US and Canada in January 2026, is now in beta for Premium listeners in the UK, Ireland, Australia, and Sweden.

- Users type a natural-language description in English to generate a playlist drawn from their personal listening history combined with real-time music and cultural trends.

- Each track in the generated playlist includes a one-line explanation of why it was included.

- Playlists can be refreshed on a daily or weekly schedule, and users can edit or replace the original prompt at any time.

- Example prompts from Spotify include requests like finding “one artist I haven’t listened to yet but would probably love” and building a playlist that gives “an overview of their catalog”, or generating a nostalgia mix of “British pop bangers from the ’00s.”

- Usage limits are in place during the beta phase and may change as Spotify gathers feedback.

My take: I really enjoyed this new feature. If you live in Sweden, UK, Ireland or Australia, just launch the Spotify app, go into Create and choose Prompted Playlist. Write your list prompt and set it to update the list daily or weekly. For me it worked really well.

Google Releases Nano Banana 2

https://blog.google/innovation-and-ai/technology/ai/nano-banana-2

The News:

- Nano Banana 2 (“Gemini 3.1 Flash Image”) is Google’s latest image generation and editing model, released February 26, 2026, combining the speed of the Flash tier with features previously exclusive to Nano Banana Pro.

- The model supports resolutions from 512px to 4K across multiple aspect ratios, maintains subject consistency for up to five characters and fidelity of up to 14 objects within a single workflow.

- It uses real-time web search grounding, pulling current images and information to render specific subjects more accurately and to support infographic and data visualization creation.

- Text rendering is improved, including in-image translation and localization across languages, making the feature applicable to marketing mockups and localized content.

- Nano Banana 2 is rolling out as the default image model across the Gemini app, Google Search (AI Mode and Lens), Flow, Google Ads, AI Studio, the Gemini API, and Vertex AI, expanding to 141 new countries and eight additional languages.

- SynthID watermarking is applied to all generated images, and C2PA Content Credentials verification is coming to the Gemini app soon. The SynthID verification feature in Gemini has been used over 20 million times since November 2025.

My take: Google launched it’s first “Nano Banana” image generator in August 2025, and then “Nano Banana Pro” launched in November coinciding with the launch of Gemini 3.0 Pro. Nano Banana Pro is still a better model than “Nano Banana 2” that launched last week (better on text and details), but it is costly. Thus as of this week “Nano Banana Pro” has been restricted to Gemini Pro / Ultra subscribers, so if you are using Nano Banana for anything commercial or depend on it in your workflows, then you probably need to start paying for a Pro subscription.

Read more:

Perplexity Launches “Computer”

https://www.perplexity.ai/hub/blog/introducing-perplexity-computer

The News:

- Perplexity Computer, launched February 25, 2026, is a cloud-based agentic platform that orchestrates 19 AI models to research, write code, design, deploy, and manage projects from a single prompt.

- The system uses Claude Opus 4.6 as its core reasoning engine, with Gemini for broad research, ChatGPT 5.2 for long-context tasks, Grok for lightweight tasks, and dedicated models for image and video generation.

- Tasks run in isolated cloud environments with integrations for Gmail, Slack, Notion, Calendar, and over 400 other apps; workflows can execute persistently for hours, days, or weeks without user intervention.

- Users can run multiple projects in parallel, assign specific models to subtasks, and set credit-based spending caps; the system includes persistent memory across sessions.

- Access is currently limited to Perplexity Max subscribers at $200/month, with 10,000 credits included monthly and a 20,000-credit early-adopter bonus; rollout to Pro and Enterprise tiers is planned.

My take: The closest products to Perplexity Computer are Manus and OpenAI Operator. What I really liked about Manus was their demo website where you could replay tasks and see how Manus handles them. Perplexity has done the same, so if you go to https://www.perplexity.ai/computer/live you can see how Perplexity Computer handles complex tasks like creating a Window 3.1 simulator. I have a Perplexity Pro account myself, and I will give this a good try once they roll it out to Pro/Enterprise tiers.

Read more:

- Perplexity Computer: The Good, The Bad, and The Ugly : r/ArtificialInteligence

- Perplexity Computer: What I Built in One Night (Review, Examples, and How It Compares to OpenClaw and Claude)

7 of 12 xAI Co-Founders Have Now Left the Company

The News:

- Toby Pohlen, one of xAI’s 12 co-founders, announced his departure on February 27, making him the seventh co-founder to leave the company in less than three years.

- Pohlen was the third co-founder to exit in February alone, following Tony Wu and Jimmy Ba, who left earlier in the month.

- Before leaving, Musk had put Pohlen in charge of Macrohard, a new xAI division focused on AI software run by digital agents, named as a reference to Microsoft.

- The departures follow xAI’s merger with SpaceX, which valued the combined entity at $1.25 trillion. SpaceX is now planning what is expected to be the largest IPO on record.

- Pohlen wrote on X: “Three years, thousands of PRs, and a million jokes. Today was my last day @xai”, and said his immediate plans were to sleep more than 8 hours and “think about what I want to do next”.

My take: On February 11 Elon musk said “As a company grows, especially as quickly as xAI, the structure must evolve just like any living organism. This unfortunately required parting ways with some people”. xAI is indeed evolving, and if I would pick three words to describe the direction of X it would be messy, mansplaining and immature. Elon Musk always knows best, and his weird sense of humor and ideology has a strong influence on xAI both as a company and their AI model “Grok”. Just do a quick Youtube search for Tesla unhinged mode and then consider the values of a company releasing something like this to the public.

Read more:

- Elon Musk suggests spate of xAI exits have been push, not pull | TechCrunch

- TESLA’S NEW GROK AI UPDATE… HOW IS THIS EVEN LEGAL?! WTF!!! – YouTube

Anthropic Adds Remote Control to Claude Code

The News:

- Anthropic released Remote Control for Claude Code on February 24-25, 2026, currently in research preview for Claude Max subscribers ($100-$200/month), with Pro ($20/month) access coming later.

- Remote Control acts as a synchronization layer between a local terminal session and the Claude mobile app or web browser, accessed via the claude rc command or by scanning a QR code in the terminal.

- Sessions run entirely on the user’s local machine, not in Anthropic’s cloud, meaning local MCP servers, tools, file systems, and project configuration remain available.

- Traffic routes through the Anthropic API over TLS using short-lived, scoped credentials. The local agent makes outbound HTTPS requests only and does not open inbound ports.

- Current limitations include: only one remote connection per session is supported, the terminal must stay open, and network outages exceeding roughly 10 minutes will terminate the session and require a restart.

- API keys are not supported; Team and Enterprise plans are excluded from the research preview.

My take: What’s worse than an agentic AI with full control over your computer? An AI agent that’s remote-controlled with full control of your computer where you as user cannot see what’s going on. Anthropic is really going all-in with their vibe-coding approach, where you can produce code when you’re in the bathroom or walk your dog (their idea, not mine). Maybe we will be working like this in a few years time, but if you try it now just be aware that you are building up a technical debt that you need to deal with sooner than later. Today’s AI models are simply not able to produce code that’s consistently good enough without detailed supervision.

Read more:

Claude Code Gets Auto Memory, /simplify and /batch

https://code.claude.com/docs/en/memory

The News:

- Claude Code v2.1.59 introduced auto memory, a feature where Claude writes and maintains its own project notes in a MEMORY.md file, persisting context across sessions without any manual setup.

- Auto memory is enabled by default and captures project patterns, debugging insights, and architectural decisions that Claude discovers during sessions.

- The feature stores notes in a structured directory: a main MEMORY.md entry file plus optional topic-specific files such as debugging.md or api-conventions.md.

- Auto memory is distinct from the manually maintained CLAUDE.md file; when the two conflict, instructions from CLAUDE.md take precedence.

- The next version of Claude Code also adds two new Skills: /simplify and /batch.

- /simplify runs parallel agents to improve code quality, tune code efficiency, and check CLAUDE.md compliance. Intended usage: run it after a code change, e.g., “hey claude make this code change then run /simplify”.

- /batch plans code migrations interactively, then executes them in parallel using dozens of agents. Each agent runs in full isolation via git worktrees, runs tests, and opens a pull request. Example usage: “/batch migrate src/ from Solid to React”.

My take: My main two problems with Claude Code and Claude Opus are that very often Claude Opus forgets things in the CLAUDE.md rules file and often ignores surrounding code to understand context, structure and coding style. The main reason for this is that Opus is pretty bad at using it’s full context memory, which is why tools like Claude Code tries everything it can to not fill up the context memory with irrelevant information.

Anthropic are aware of this, but instead of fixing it at the core (improving context memory performance and letting Claude Code use more of the context window), they patch around it with things like “auto memory” and the new /simplify command. The auto memory feature here is particularly messy where it will read the first 200 lines from MEMORY.md and if the file expands beyond that it will move things over to two other files, debugging.md and api-conventions.md. This just increases your work load since you need to not only manage CLAUDE.md but now also these three files. And as we know from the AGENTS.md study reported previously in this newsletter, you need to do this work by hand and prune these files from old and irrelevant information continuously. To me this feels like a hack trying to work around the context memory limitations of CLAUDE through four separate rules files.

Everyone who have worked with Claude Opus knows it is not very good at writing short and efficient code, so now Anthropic suggests we run “/simplify” after everything Opus have created in an attempt to clean things up. The development team of Claude Code is clearly moving much faster than the model team here. This is one of the things you really want the model to handle by itself – first write the code, then always go through the code in fresh context window reviewing it for issues, performance optimizations and code duplication. Like the auto memory feature, this new /simplify command also feels like a temporary hack before this functionality is built-in to the model itself.

Read more:

- Anthropic Just Added Auto-Memory to Claude Code (I Tested It) | by Joe Njenga | Feb, 2026 | Medium

- Boris Cherny on X: “In the next version of Claude Code.. We’re introducing two new Skills: /simplify and /batch.” / X

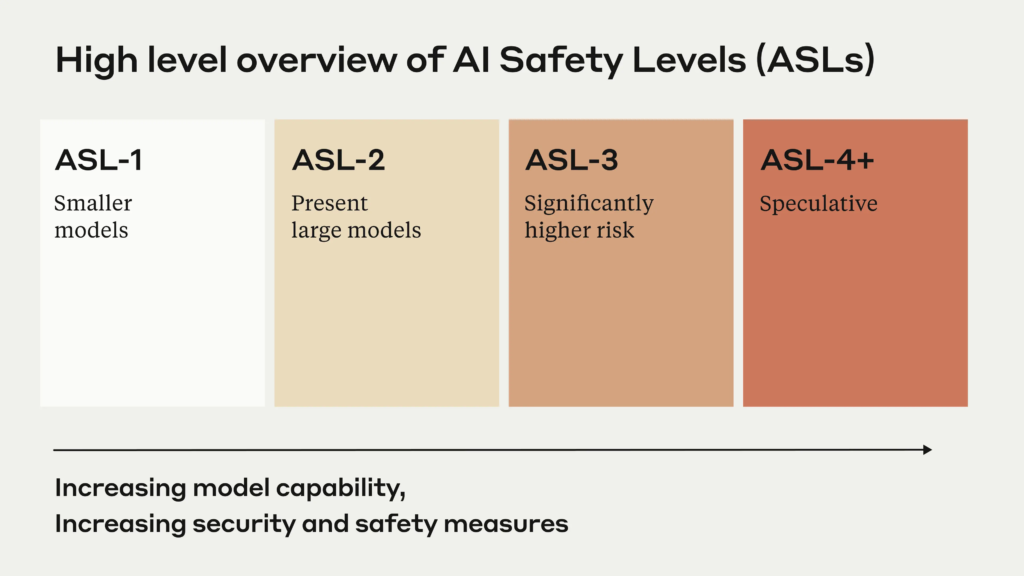

Anthropic Releases Responsible Scaling Policy Version 3.0

https://www.anthropic.com/news/responsible-scaling-policy-v3

The News:

- Anthropic published the third version of its Responsible Scaling Policy (RSP) on February 24, 2026, a voluntary framework for managing catastrophic risks from advanced AI systems.

- The original RSP (2023) used a conditional “if-then” structure: if a model exceeded defined capability thresholds (such as biological weapons assistance), then specific safeguards must be activated. RSP v3 restructures this by replacing hard commitments with publicly declared, non-binding goals called a “Frontier Safety Roadmap.”

- The new policy separates Anthropic’s own planned mitigations from broader recommendations it believes the entire AI industry should adopt, acknowledging that some higher-level safeguards are not achievable by a single company. A RAND report cited in the policy notes that its top-tier model weight security standard is “currently not possible” without national security community assistance.

- Risk Reports will now be published every 3-6 months, detailing model capabilities, threat models, and active mitigations. External expert reviewers will audit these reports under specific circumstances, with pilots already underway.

- Anthropic activated ASL-3 safeguards in May 2025, primarily targeting chemical and biological weapons misuse by threat actors with limited expertise, and considers that implementation a success.

My take: The main change with RSP v3 is that it replaces a hard pause on model deployment if capability thresholds were breached with a more flexible roadmap, reducing the risk of sudden service interruption. Since the original RSP was introduced in 2023 model performance has grown exponentially, and we are at the point where it’s the getting very hard to draw a line on when a model is “too good” so it should be stopped before rolling it out.

Read more:

OpenAI Adds WebSocket Mode to the Responses API

https://developers.openai.com/api/docs/guides/websocket-mode

The News:

- OpenAI added WebSocket mode to the Responses API, targeting agentic workflows with many sequential tool calls, announced February 23, 2026.

- Instead of re-sending full context with each HTTP request, clients maintain a persistent connection to wss://api.openai.com/v1/responses and send only incremental inputs plus a previous_response_id on each turn.

- For workflows with 20 or more tool calls, OpenAI reports up to approximately 40% faster end-to-end execution compared to standard HTTP mode.

- Clients can pre-warm connection state by sending response.create with generate: false, preparing tools and instructions before the first generation turn without consuming model output.

- The mode is compatible with Zero Data Retention (ZDR) and store-false, since prior-response state is held in a connection-local in-memory cache rather than written to disk.

- Single connections are capped at 60 minutes; after that, clients must open a new connection and either chain from a persisted response ID or restart with full context.

My take: Cline posted some interesting statistics on X: 15% faster performance on simple tasks, and 39% faster performance on complex multi-file workflows. [This is already in use by Codex CLI](Codex changelog), so congratulations to all GPT-API-users out there, everything should be much quicker as of this week.

Read more:

- Cline on X: “We tested @OpenAI’s new WebSocket connection mode for the Responses API into Cline and the early numbers are wild. ” / X

- Codex changelog

Taalas HC1 Delivers 17,000 Tokens Per Second

https://kaitchup.substack.com/p/taalas-hc1-absurdly-fast-per-user

The News:

- Taalas, a 24-person startup, has launched HC1, an ASIC that hardwires Meta’s Llama 3.1 8B weights directly into silicon, delivering approximately 17,000 tokens per second per user.

- The chip uses TSMC’s 6nm process, measures 815 mm², contains 53 billion transistors, and fits as a PCIe card drawing ~250W, with up to 10 cards per standard air-cooled rack.

- Inference is priced at $0.0075 per 1M tokens for Llama 3.1 8B.

- Base model weights are fixed at fabrication; the chip supports configurable context windows and LoRA adapters stored in on-chip SRAM.

- Weights are quantized at 3-6 bits, which Taalas acknowledges degrades output quality relative to full-precision models.

- Taalas raised $169 million alongside the launch, with a second model targeting Q2 2026 and a next-generation HC2 platform planned for later in 2026.

My take: As with diffusion-based token generators (see the news about Mercury 2 below), it’s hard to predict how this technology will scale going forward. The best model they could fit on the chip today was a heavily quantized version of Llama 3.1 8B. Taalas HC1 is an interesting tech demo, and apparently enough of a demo to grant them $169 million. That said I wouldn’t bet my house on that they are able to bring a large 32B q8 model running on their chips within a year, and it’s not even clear if their platform scales well with model complexity.

Inception Labs Releases Mercury 2

https://www.inceptionlabs.ai/blog/introducing-mercury-2

The News:

- Inception Labs released Mercury 2 on February 23, 2026, a reasoning language model that uses diffusion-based parallel token generation instead of the autoregressive, token-by-token method used by GPT, Claude, and Gemini.

- Mercury 2 generates 1,009 tokens per second on NVIDIA Blackwell GPUs, with an end-to-end latency of approximately 1.7 seconds, compared to around 5 seconds for Claude Haiku 4.5 and 22.8 seconds for GPT-5 Mini.

- The model scores 91.1 on AIME 2025, 73.6 on GPQA Diamond, and 67.3 on LiveCodeBench, placing it in the same quality tier as Claude 4.5 Haiku and GPT 5.2 Mini rather than frontier reasoning models.

- Pricing is set at $0.25 per million input tokens and $0.75 per million output tokens, and the API is OpenAI-compatible.

- The model supports 128K context, native tool use, schema-aligned JSON output, and tunable reasoning depth.

- Skyvern CTO Suchintan Singh stated: “Mercury 2 is at least twice as fast as GPT-5.2, which is a game changer for us”.

My take: Mercury 2 does not perform anywhere near current SOTA models on benchmarks, but it’s extremely fast. Diffusion-based token generators are interesting, but it’s still way too early to determine if they will stay competitive in the next few years.

Read more: