After nearly five years of development and over $80 billion of investments, Meta is closing down the Metaverse on June 15. Metaverse was a constant race for a technology that never arrived: no-one felt comfortable standing up for hours with a big heavy device strapped on their face, so Meta began to design services for a future device that would be as light as glasses and could be used anytime and everywhere, with nearly unlimited battery and at an affordable price. The possibilities are endless when you design for something that doesn’t yet exist.

In many ways I feel the same about many areas in AI. The true value of a platform like OpenClaw comes from a future where AI agents can work as your own personal assistant, something you trust with your personal details and your sensitive data. We are not there yet, and while many believe we will be there very soon (why would NVIDIA otherwise bet on NemoClaw), we don’t yet know if we will arrive there or when. We might get there some day, but it’s one thing to have an AI creating documents on your computer, and quite another to have an AI interacting autonomously with other people on your behalf.

It’s the same with local AI models, like Nemotron that is shipped with NemoClaw. Every new small model is better than the previous small model, but they are all inferior to large state-of-the-art models like Claude and GPT. Yet many companies still build their strategy around these smaller local models, in the hope that they will soon become capable enough to automate human tasks in the organization. Local models are fun to use, but be aware that this might be another Metaverse. Local models of a size that you can run on reasonable hardware (below 300b parameters) might never reach the point where they actually become usable for most tasks. As with the Metaverse, there is not just one issue to solve here: it’s about data size, token usage, GPU usage, context window precision and hallucination rates, all at once. Test-time-compute, our latest scaling factor, just made it even more difficult to run local models efficiently.

So how do you avoid betting on the next Metaverse? Begin by using models that can solve real tasks today, like Gemini 3.1, GPT-5.4 and Opus 4.6. Then use them in a scaffolding with a proven track record, like Agno, LangGraph or the Microsoft Agent Framework. Use them to automate repetitive tasks; the top models excel at that. Then, when the models become better, expand your use cases to less repetitive, more nuanced tasks.

Then maybe in the future we will discover some new architecture that will make small local models insanely powerful, and we will solve the security issues and prompt injection risks with large LLMs to make them reliable as personal agents, but until that happens maybe don’t focus your main AI strategy around those two areas specifically, despite Jensen Huang saying “This is definitely the next ChatGPT”.

Thank you for being a Tech Insights subscriber!

Listen to Tech Insights on Spotify: Tech Insights 2026 Week 13 on Spotify

Notable model releases last week:

- GPT-5.4 Mini and GPT-5.4 Nano. Designed for high-volume workloads.

- Mistral Leanstral. The first open-source code agent for Lean 4.

- Mistral Small 4. Hybrid model for chat, coding, agentic tasks, and reasoning.

- NVIDIA Nemotron 3 Nano 4B. Optimized for on-device deployment.

- Xiaomi MiMo-V2-Pro. 1T Foundation model for real-world agentic workloads.

THIS WEEK’S NEWS:

- Mistral Forge: Train Enterprise AI Models on Proprietary Data

- What 81,000 People Want From AI – And Why Job Fear Dominates in Wealthy Nations

- Claude Code adds Telegram and Discord control for running agent sessions

- Claude Cowork Gets “Dispatch”: Assign Desktop AI Tasks From Your Phone

- OpenAI to Acquire Astral, Integrating uv, Ruff, and ty into Codex

- Cursor Releases Composer 2

- Nvidia NemoClaw: An Enterprise-Grade Security Layer for OpenClaw Agents

- Google Stitch Gets a Major Update With AI-Native Canvas and Voice Design

- Google AI Studio Gets Full-Stack Vibe Coding With Antigravity Agent and Firebase

- Lovable Expands Beyond App Building to General-Purpose Work Platform

- Midjourney V8 Alpha Opens for Community Testing

- Microsoft Releases MAI-Image-2 Text-to-Image Model

- Perplexity Launches Perplexity Health

Mistral Forge: Train Enterprise AI Models on Proprietary Data

The News:

- Mistral launched Forge on March 17, a platform that lets enterprises train AI models from scratch on their own internal data, including codebases, compliance policies, operational records, and institutional documentation.

- Forge supports three stages of model development: pre-training on large internal datasets, post-training for task-specific refinement, and reinforcement learning to align model behavior with internal policies and operational objectives.

- The platform supports both dense and mixture-of-experts (MoE) model architectures, as well as multimodal inputs (text, images, and other formats), giving organizations options to balance performance against compute costs.

- Forge includes an agent-aware layer: Mistral’s Vibe agent can autonomously handle hyperparameter tuning, job scheduling, synthetic data generation, and benchmark monitoring during the training process.

- Early customers include ASML, Ericsson, the European Space Agency, DSO National Laboratories Singapore, and Home Team Science and Technology Agency Singapore.

- Enterprises retain ownership of trained models and underlying data; Mistral positions Forge as infrastructure that operates within a company’s own environment, with no cloud lock-in.

“Instead of relying on broad, public data, organizations can train models that understand their internal context embedded within systems, workflows, and policies, aligning AI with their unique operations.”

My take: This is one of the most significant announcements for us in the EU in a long time. Mistral Forge is a managed end-to-end platform to train custom LLMs, and there are currently no other managed end-to-end solutions like it in the EU. While you can use Azure AI Foundry to fine-tune and deploy existing foundation models, only Mistral Forge lets you create these models from the ground up, including pre-training, post-training, reinforcement learning and evaluation.

For me as the CEO of an AI consultancy company, this is great news. Many companies want to train custom models but the work required to set up the process typically puts a hard limit on the ambitions. Training models in the US or China is often not an option. So thank you Mistral for this, I hope to use it soon.

What 81,000 People Want From AI – And Why Job Fear Dominates in Wealthy Nations

https://www.anthropic.com/features/81k-interviews

The News:

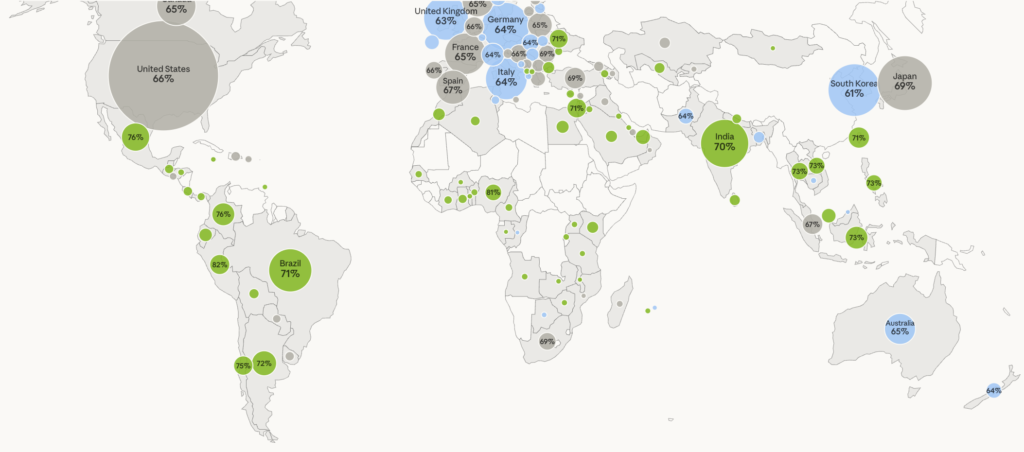

- Anthropic published findings from 80,508 structured interviews conducted in December 2025, spanning 159 countries and 70 languages, making it the largest qualitative AI study on record.

- The study used “Anthropic Interviewer,” a version of Claude configured to conduct adaptive conversational interviews, bridging the typical tradeoff between depth and scale in qualitative research.

- The top aspiration (18.8%) was “professional excellence,” with people wanting AI to handle routine tasks so they can focus on higher-value work; a healthcare worker in the U.S. said: “Since implementing AI, the pressure of documentation has been lifted. I have more patience with nurses, more time to explain things to family members.”

- 81% of respondents said AI had already taken at least one step toward their stated vision; the leading delivery area was productivity (32%), including one software engineer who reported cutting a 173-day process down to 3 days.

- Concern about unreliability ranked first (26.7%), followed closely by job and economic concerns (22.3%) and loss of human autonomy (21.9%); respondents voiced 2.3 distinct concerns on average.

My take: This study differs from other AI surveys not just in scale but in format. Where most prior AI research relies on static questionnaires, letting Claude do the actual “interviewing” meant Anthropic could probe why people want what they want, revealing for example that one of the main drivers behind the wish for productivity was a deeper wish for more family time and personal freedom.

The most striking pattern in the data is the geographic split in overall AI sentiment. You can see that in the chart above. Developed countries are the most negative toward AI, primarily due to concerns about people losing their jobs. Many respondents said things like “At my old job, they replaced me as a writer with an AI” and “I got laid off from my job in May because my company wanted to replace me with an AI system”.

I found this study fascinating because it highlights a paradox. People love AI because it allows them more personal time and freedom. Yet they fear AI for that same reason: they may be replaced because their work can now be automated. My personal reflection here is that the better the models become, the greater the fear of them will be in developed countries, especially among white-collar workers whose work can soon be fully automated. And this is something you as an organization must consider when evangelizing all the possibilities of AI.

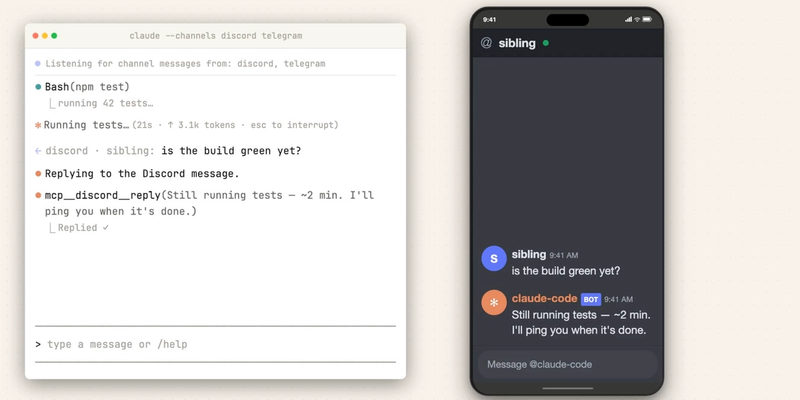

Claude Code adds Telegram and Discord control for running agent sessions

https://code.claude.com/docs/en/channels

The News:

- Anthropic released Claude Code Channels on March 20 as a research preview in Claude Code. It lets external services push events into an already-running local Claude Code session via MCP server plugins, so Claude can act on them without a human at the terminal.

- Telegram and Discord are the two supported platforms at launch, both implemented as installable plugins from an Anthropic-maintained plugin registry.

- Messages arrive in the session as structured notifications/claude/channel MCP events. Claude reads them, executes work against local files and tools, and replies back through the same chat platform. The terminal shows only the tool call and a confirmation such as “sent”, while the full reply appears in the messaging app.

- Incoming messages are gated by a sender allowlist bootstrapped through a pairing code. Anyone not on the allowlist is silently dropped. On Team and Enterprise plans, an admin must explicitly enable the channelsEnabled setting before any user can activate channels.

- Events only arrive while the Claude Code session is open. For unattended use, the session must run in a background process or persistent terminal. Skipping permission prompts requires the --dangerously-skip-permissions flag.

My take: Before you run off to test this, know that you need to enable --dangerously-skip-permissions in Claude Code for it to run unattended. This means Claude Code can write, delete, and execute any file on your machine without asking. It could do that before as well by creating a script or program, but you would still have to approve running that program.

Anthropic is moving at record pace here, adding features to Claude Code at an astonishing rate. Sometimes, however, I wish they would slow down just a little. For example, I do not think anyone should run Claude Code outside a sandbox environment. Running it like this in the terminal with the user’s access rights and opening it up for remote control through Discord or Telegram is just an accident waiting to happen. Not everyone will run this on a dedicated machine that is properly isolated from the rest of the local network.

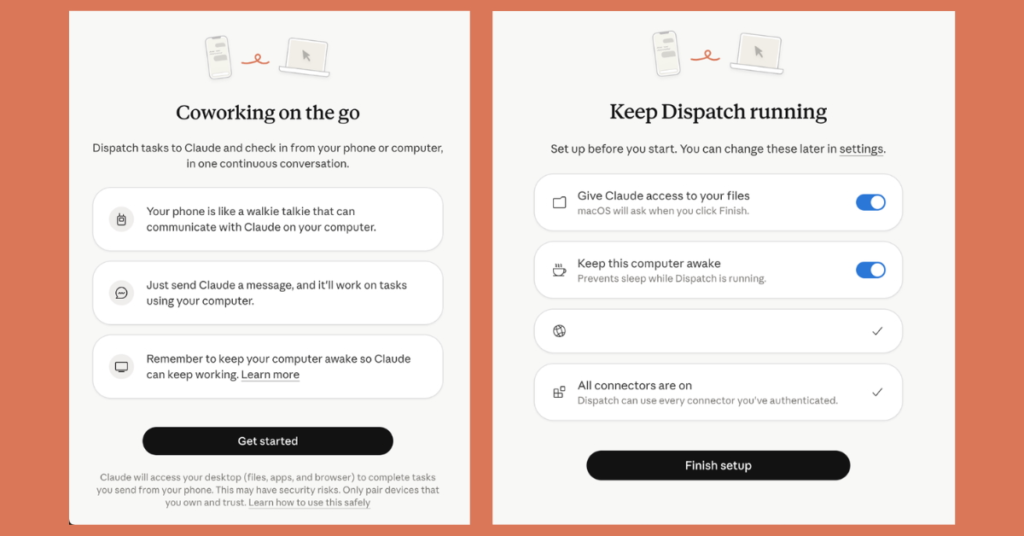

Claude Cowork Gets “Dispatch”: Assign Desktop AI Tasks From Your Phone

https://support.claude.com/en/articles/13947068-assign-tasks-to-claude-from-anywhere-in-cowork

The News:

- Anthropic added a feature called Dispatch to Claude Cowork, currently in research preview, that lets users assign tasks from a mobile device and have Claude execute them on a desktop computer using locally configured connectors, plugins, and file access.

- Unlike standard mobile chat sessions, Dispatch maintains a single persistent conversation thread that retains context across all interactions, accessible from both phone and desktop without resetting.

- From a phone, users can instruct Claude to pull data from local spreadsheets, search Slack messages and email, draft documents, build presentations from Google Drive files, or organize folders on the desktop.

- Claude delivers completed outputs, such as a compiled report or formatted file, as a message when done, rather than streaming intermediate steps.

- The feature requires the latest Claude Desktop app (macOS or Windows x64) and latest Claude mobile app, both online simultaneously. The desktop must remain awake and the app open during task execution.

- Current limitations include no push notifications when tasks finish, no ability to start multiple threads, and no support for scheduling tasks within Dispatch.

My take: How often are you away from your computer and wishing an agent could do work on it? I mean, Claude is generally quite quick and effective, so it’s not like you have to sit and wait around for a long time when you are working with it. Many media sites have compared this to OpenClaw, saying this is Anthropic’s answer to that. But this is nothing like OpenClaw. This is just a remote-controlled sandboxed environment that can access some of your files. It’s still Claude Cowork, not OpenClaw.

OpenAI to Acquire Astral, Integrating uv, Ruff, and ty into Codex

https://openai.com/index/openai-to-acquire-astral

The News:

- Last week OpenAI announced an agreement to acquire Astral, the company behind the Python developer tools uv, Ruff, and ty, with the Astral team joining the Codex division after regulatory approval.

- uv is a dependency and environment manager for Python, downloaded more than 126 million times in the month prior to the announcement, making it one of the most widely adopted Python tools since its February 2024 release.

- Ruff is a Python linter and formatter; ty is a Python type checker. Both tools give AI coding agents fast, in-workflow mechanisms to verify code quality.

- Codex has reached over 2 million weekly active users, with a 3x increase in users and a 5x increase in usage since the start of 2026.

- Financial terms were not disclosed; the acquisition is subject to standard regulatory approval, and both companies remain independent until closing.

“The Codex CLI is a Rust application, and Astral have some of the best Rust engineers in the industry” Simon Willison

My take: This sounds like an easy win for OpenAI. They get a strong set of tools for AI agents to produce higher quality code output, and they get some of the best Rust programmers in the industry. Anthropic is going the JavaScript route, having acquired Bun, makers of the popular JavaScript runtime, last December. I personally think Rust is the way to go for these types of tools, but I know things looked different just a year ago. Given the choice today, I’m not so sure Anthropic would pick JavaScript for Claude Code.

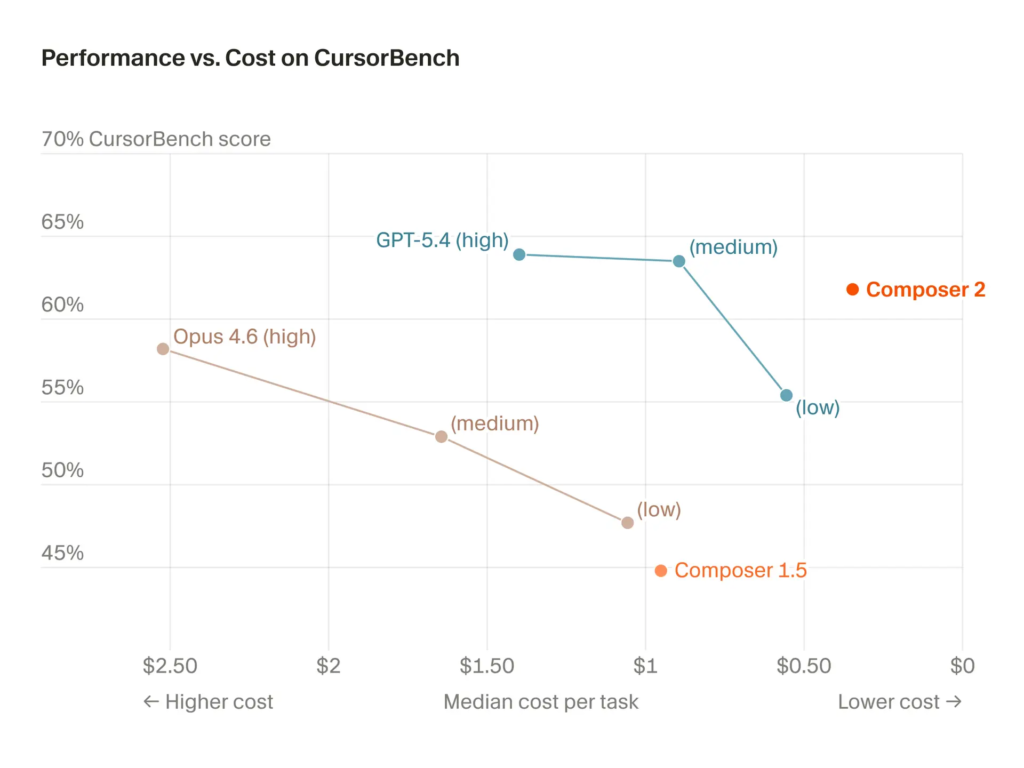

Cursor Releases Composer 2

https://cursor.com/blog/composer-2

The News:

- Cursor released Composer 2 on March 18, the third generation of its proprietary coding model, now available inside the Cursor IDE and in early alpha of a new standalone interface.

- Composer 2 scores 61.3 on CursorBench, 61.7 on Terminal-Bench 2.0, and 73.7 on SWE-bench Multilingual, up from 44.2, 47.9, and 65.9 respectively for Composer 1.5.

- The model uses a continued pretraining run as its base, with reinforcement learning on long-horizon coding tasks, and can complete tasks requiring hundreds of sequential actions.

- Two pricing tiers are available: Standard at $0.50/M input and $2.50/M output tokens, and Fast (the default) at $1.50/M input and $7.50/M output tokens.

- The model has a 200,000-token context window and is tuned for tool use, file edits, and terminal operations inside the editor.
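To put the two pricing tiers above into perspective, here is a small sketch that estimates the cost of a single request. The per-million-token prices come from the bullet list; the function name and the example token counts are made up for illustration.

```python
# Prices in USD per million tokens, taken from the tiers listed above.
PRICES_USD_PER_MILLION = {
    "standard": {"input": 0.50, "output": 2.50},
    "fast": {"input": 1.50, "output": 7.50},
}


def request_cost_usd(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request for the given tier (hypothetical helper)."""
    price = PRICES_USD_PER_MILLION[tier]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Illustrative request: 150,000 input tokens and 20,000 output tokens.
for tier in PRICES_USD_PER_MILLION:
    print(tier, request_cost_usd(tier, 150_000, 20_000))
```

Since both input and output prices are three times higher on Fast, the same request always costs three times as much on the default tier as on Standard.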

“Composer 2 is Cursor’s own agentic model. It reaches frontier-level coding performance”

My take: The launch of Composer 2 caused quite a stir last week. It started on March 19 (last Thursday) when the X user @fynnso posted that Composer 2 is just the Chinese model Kimi K2.5 with some additional reinforcement learning. Shortly after that, two employees at Moonshot AI, the company behind Kimi K2.5, posted “I can’t recall that Cursor has come to us for a license 🤔”, and “We are shocked that @cursor_ai did not respect our license nor did they pay us any fees! @mntruell why??”.

It turned out that Composer 2 actually is the Chinese AI model Kimi K2.5, with additional reinforcement learning, hosted by Fireworks AI. It also turned out that Fireworks AI does have a license with Moonshot AI, one that those employees were not aware of. Moonshot AI later posted “Cursor accesses Kimi-k2.5 via @FireworksAI_HQ hosted RL and inference platform as part of an authorized commercial partnership.”

So, what happened here, really? It’s clear that Cursor wanted Composer 2 to look like they had developed their own proprietary LLM. Their documentation says: “Composer 2 is Cursor’s own agentic model”. By using Fireworks AI as the hosting provider, and letting them handle the licensing agreement with Moonshot AI, they could also sidestep the Kimi K2.5 license requirement of visible attribution when using the model. Cursor themselves didn’t have to say anything other than that it was “their own agentic model”. Then someone reverse-engineered the communications protocol and found Kimi in the model description. If they had just remembered to rename the model internally, I am quite sure everyone would still believe Composer 2 was custom-built by Cursor.

So, is Composer 2 any good? For small bits of code I think it works fine; it’s an enhanced version of Kimi K2.5 after all. But don’t expect anything even remotely close to GPT-5.4 in coding performance.

Read more:

- Fynn on X: “Composer 2 is just Kimi K2.5 with RL at least rename the model ID” / X

- Lin Qiao on X: “🔥 Cursor Composer2 launched on Fireworks 🔥 This time it’s not just inference but also RL powered by @FireworksAI_HQ.” / X

- Kimi.ai on X: “Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation.” / X

- Composer 2 | Cursor Docs

Nvidia NemoClaw: An Enterprise-Grade Security Layer for OpenClaw Agents

https://nvidianews.nvidia.com/news/nvidia-announces-nemoclaw

The News:

- Nvidia announced NemoClaw, an open-source stack built on top of OpenClaw that adds security, privacy, and governance controls for deploying always-on autonomous AI agents in enterprise environments.

- NemoClaw installs in a single command, deploying the NVIDIA OpenShell runtime and Nemotron open models, providing an isolated sandbox that enforces policy-based security, network, and privacy guardrails around autonomous agents.

- The stack is hardware agnostic and runs on standard GeForce RTX PCs, RTX PRO workstations, DGX Station (748 GB coherent memory, up to 20 petaflops AI compute), and DGX Spark systems clustered up to four units.

- A privacy router lets agents choose between locally running open models and cloud-hosted frontier models, while staying within defined permission boundaries.

- Nvidia developed NemoClaw in collaboration with OpenClaw creator Peter Steinberger, who stated: “We’re building the claws and guardrails that let anyone create powerful, secure AI assistants”.

- Nvidia describes NemoClaw as an early-stage alpha release, noting: “Expect rough edges. We are building toward production-ready sandbox orchestration, but the starting point is getting your own environment up and running”.

My take: “This is definitely the next ChatGPT” is how Jensen Huang positioned NemoClaw, as AI shifts from answering questions to taking action. And with NemoClaw, Nvidia is building guardrails like privacy protections, oversight tools, and enterprise-grade security to ensure these agents can be “deployed safely at scale”. Now why would Nvidia want to invest millions of dollars in an open-source project like this? I believe Nvidia wants to own not only the hardware layer but the operating layer too. This is what Apple and Google are doing, and it is also what Meta was trying to achieve with Metaverse before it was cancelled. It will give them direct access to consumers without going through intermediaries.

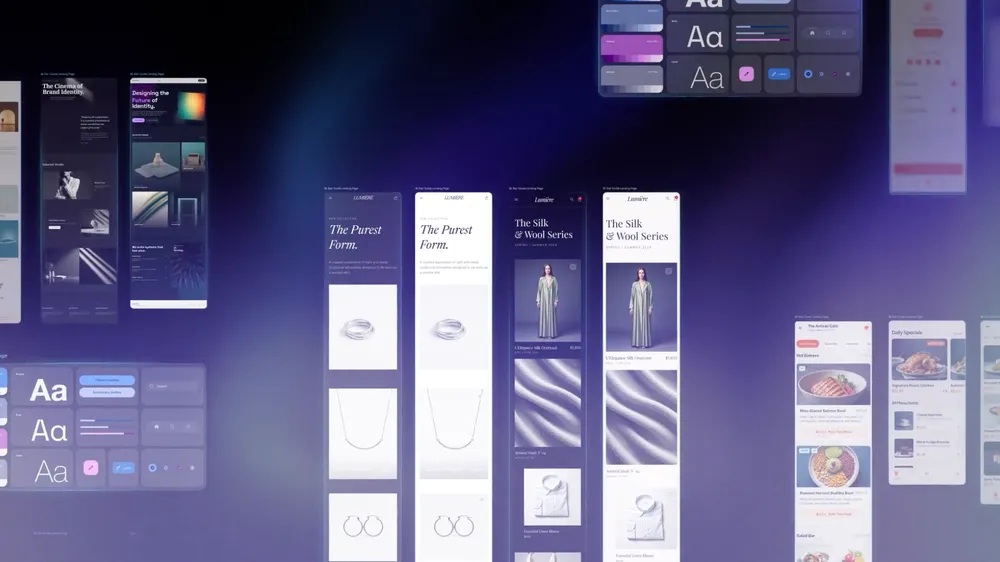

Google Stitch Gets a Major Update With AI-Native Canvas and Voice Design

https://blog.google/innovation-and-ai/models-and-research/google-labs/stitch-ai-ui-design

The News:

- Google Labs updated Stitch, an AI-powered UI design tool, from a single-screen generator into a full AI-native design canvas that takes natural language, images, or code as input and outputs high-fidelity UI designs.

- The redesigned canvas is infinite and supports parallel exploration via an Agent Manager, which tracks multiple design directions simultaneously within a single project.

- Stitch now generates up to five screens simultaneously, compared to one at a time in the original version.

- A new DESIGN.md file format, compatible with AI coding tools, lets users export or import design system rules across projects and tools such as Claude Code, Cursor, and Gemini CLI via MCP integration.

- Voice input lets users issue real-time design instructions directly to the canvas, such as requesting different color palettes or menu variants while reviewing a design.

- Designs export to Figma format, React code, and developer tools including AI Studio, with the Stitch MCP server and SDK available for further integration.

- Stitch remains free, with 350 design generations per month on a Gmail account.

My take: Vibe-design the UX in Stitch, then export it straight to AI Studio and vibe-build a complete full stack application. Stitch is not a competitor to tools like Figma, but rather a quick and easy brainstorming tool to explore different design directions. Google seems to be going in every possible direction now with their AI tools to see what sticks, and looking back in history that strategy typically tends to make it hard for anything to be really successful.

Read more:

- Google just dropped Stitch… and it might actually threaten Figma : r/FigmaDesign

- Google Stitch: A Product Designer’s Review

Google AI Studio Gets Full-Stack Vibe Coding With Antigravity Agent and Firebase

The News:

- Google AI Studio now includes a full-stack coding experience powered by the Antigravity coding agent, letting users build and deploy web apps from natural language prompts without leaving the browser.

- The agent proactively detects when an app requires a database or login and, after user approval, provisions Cloud Firestore for storage and Firebase Authentication for Google-based sign-in.

- Framework support now includes React, Angular, and Next.js, selectable via an updated Settings panel.

- The agent automatically installs third-party libraries such as Framer Motion and Shadcn when it determines they are needed for animations or UI components.

- Developers can supply API credentials for external services such as Google Maps or payment processors, stored in a new Secrets Manager under the Settings tab.

- Google states the platform has been used internally to build “hundreds of thousands of apps” over the past few months, and plans future integrations with Google Workspace (Drive and Sheets) and one-click deployment to Google Antigravity.

My take: AI Studio is now a real competitor to Lovable, Bolt.new and Replit – but why did it take them so long? The reasons are many. First, they lacked a good agentic development environment, but buying Windsurf last year and turning it into “Google Antigravity” solved that problem. Secondly, Gemini 2.5 and 3.0 were not very good at writing code. Gemini 3.1, launched last month, is still behind the competition, but it’s getting closer. For vibe coding I think it will do just fine. In many ways the Google AI Studio launched last week is the first “1.0” release from Google for full-stack vibe coding. It starts here, and it will only get better.

I think the main challenge going forward for Google is branding and awareness. Almost everyone knows about Lovable, very few know about AI Studio. Google will have to work intensely on the marketing here to get people to choose it instead of Lovable, but if there is any company that has the chance to do it I think it’s Google – as long as they manage to keep the focus long enough.

Lovable Expands Beyond App Building to General-Purpose Work Platform

https://lovable.dev/blog/go-beyond-building-full-stack-apps-with-lovable

The News:

- Lovable, the AI-powered full-stack app builder, announced on March 19 that its platform now handles data analysis, document generation, image and video creation, and file processing, in addition to building web applications.

- The platform’s AI agent can now install tools, execute Python scripts, convert file formats, and validate its own output within a secure sandbox environment, rather than only generating text.

- Supported file inputs and outputs include PowerPoint, Word, PDF, CSV, Excel, JSON, XML, images (JPEG, PNG, GIF, WebP), and video files.

- Lovable can connect to third-party tools such as Slack, Amplitude, and Granola to pull data into its workflows. For example, users can ask it to pull a month of Slack feedback, run sentiment analysis, and produce a ranked feature request list, all within one conversation.

- Document generation use cases include pitch decks, invoices, marketing reports, and changelogs, with exports available in PDF, PowerPoint, Excel, and Word formats.

My take: As I wrote last week: “Having agents run freely within a controlled environment is what every AI company is pursuing” and this week it’s Lovable joining in. I can understand why Lovable wants to explore this route, but it’s not an easy one. Compare this with something like Claude Cowork or Copilot Cowork, where you get unlimited access to this functionality. With Lovable you only get 100 requests every month for $50 as a business user. If you need more, you pay $0.50 per request. And that will get very expensive quite fast.

Lovable needs to be more than just a web site builder in order to stay relevant when companies like Google are launching their own full-stack generators like AI Studio above. I just don’t think this is the right business offer, at least not at this price point. This is the challenge of running an AI startup today – when the time to create new products goes to zero you constantly need to evolve your offering, since it’s getting easier by the month for your competitors to catch up.
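To see how quickly the Lovable pricing mentioned above adds up, here is a rough sketch of the monthly bill. It assumes the figures from my take (100 included requests for $50, then $0.50 per extra request) and nothing else; the function name and the example volumes are hypothetical.

```python
# Plan figures as quoted in the text; amounts are kept in cents to avoid rounding issues.
INCLUDED_REQUESTS = 100
BASE_FEE_CENTS = 50_00
OVERAGE_CENTS_PER_REQUEST = 50


def monthly_cost_usd(requests: int) -> float:
    """Estimated monthly cost in USD for a given number of requests."""
    if requests < 0:
        raise ValueError("requests must be non-negative")
    extra = max(0, requests - INCLUDED_REQUESTS)
    return (BASE_FEE_CENTS + extra * OVERAGE_CENTS_PER_REQUEST) / 100


# Illustrative volumes: a light user, a daily user, and a small team.
for n in (100, 500, 2000):
    print(f"{n} requests: ${monthly_cost_usd(n):.2f}")
```

Under these assumptions, a team making 2,000 requests in a month would pay $1,000, which is twenty times the base fee.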

Midjourney V8 Alpha Opens for Community Testing

https://updates.midjourney.com/v8-alpha

The News:

- Midjourney released an alpha version of its V8 image generation model on March 17, available at alpha.midjourney.com for community testing before a full rollout.

- Image generation runs approximately 5x faster than previous versions, according to the official announcement.

- A new --hd flag renders images natively at 2K resolution, and --q 4 adds an extra coherence pass for complex generations.

- Text in images renders more accurately when the target text is placed inside quotation marks in the prompt.

- The web interface includes a new Grid Mode for focused batch viewing, a conversation flow mode, and settings moved into sidebars.

My take: If you want to see what Midjourney V8 can do, just head over to Christopher Fryant @cfryant on X. Midjourney is still the best visual AI tool for aesthetics, and V8 is a little bit better in most aspects. It still has slight issues with anatomy, so you still need to keep an extra eye on that in the generated images.

Microsoft Releases MAI-Image-2 Text-to-Image Model

https://microsoft.ai/news/introducing-MAI-Image-2

The News:

- Microsoft released MAI-Image-2, a second-generation text-to-image model built in collaboration with photographers, designers, and visual storytellers, now ranked #3 on the Arena.ai global leaderboard.

- The model targets photorealism, with particular attention to natural lighting, skin tone accuracy, and environments with contextual detail; Microsoft positions this as a reduction in post-production correction time for professional users.

- MAI-Image-2 generates readable text within images, covering use cases such as infographics, presentation slides, posters, and diagrams, addressing a long-standing failure mode in generative image models.

- The model supports complex and cinematic scene composition, including surreal or highly detailed visual concepts.

- Access is available now via the MAI Playground, with rollout ongoing to Copilot and Bing Image Creator; API access is live for select enterprise customers such as WPP, with broader developer access via Microsoft Foundry coming soon.

My take: Compared to their first text-to-image model MAI-Image-1, launched in November last year, MAI-Image-2 promises better in-image text generation and “enhanced photo realism”. Their web page, however, mostly shows abstract images, so it’s very hard to predict how this model will work for everyday image tasks. It’s also not available in the EU yet, and if you try it now you get the error “This region isn’t supported”. MAI-Image-1 was not very good at generating images, and this model started its training in January. I think Microsoft needs to up their game significantly if they want to compete with Google Nano Banana Pro in this area.

Perplexity Launches Perplexity Health

https://www.perplexity.ai/hub/blog/introducing-perplexity-health

The News:

- Perplexity Health is a set of connectors that links personal health data to Perplexity’s AI, letting users query their own medical records, lab results, and wearable data in one place.

- At launch, supported sources include Apple Health, Fitbit, Ultrahuman, Withings, Clue, and electronic health records from over 1.7 million care providers via partners b.well and Terra API; Oura and Function are expected soon.

- Answers draw from peer-reviewed journals and clinical guidelines, with each response linking directly to cited source material.

- Perplexity Computer integration lets users generate doctor-visit prep summaries, custom training protocols based on fitness history, or nutrition plans by running AI agents across connected data sources.

- Health data is encrypted in transit and at rest, never used to train AI models, and never sold to third parties; users can disconnect any source or delete data at any time.

- The feature rolls out first to Pro and Max subscribers in the US via iOS and web; a Health Advisory Board of physicians and researchers will review product decisions and clinical safeguards.

My take: Last week we got Copilot Health, and two months ago Anthropic launched Claude for Healthcare and OpenAI launched ChatGPT Health. This is clearly an area no-one wants to miss out on, and everyone is racing to become the number one AI tool for health history analysis. Google will launch “Health Connect” for Fitbit in April, and Amazon is launching a Health AI agent on the Amazon website and app with free 24/7 access to virtual care for Prime members. Living in the EU I can only hope we soon get some good statistics on the benefits of these systems, as this might push our decision makers to loosen the current regulations a bit.