Last week Google launched what most LinkedIn experts and AI newsletters summarized as one of the biggest technical leaps in years. The Rundown AI wrote: “Google Research introduced TurboQuant, an algorithm that compresses AI model memory over 6x without any retraining”.

And LinkedIn AI (with 13,800 followers) wrote:

“Google just made AI models smaller. Without killing performance.

Most progress in AI has focused on bigger models.

- More parameters.

- More compute.

- More cost.

TurboQuant flips that direction.

- Smaller models.

- Lower cost.

- Faster deployment.

The implication is big:

AI does not just scale up.

It also scales down.

Which means:

- More AI running locally

- Lower infrastructure costs

- Wider access beyond large tech companies

The next phase of AI is not just bigger. It is more efficient.”

This sounds amazing, right? It would be amazing, if it were true.

If everyone could slow down just a tiny bit and actually read the full news before posting, they would have found out that TurboQuant only operates on what is called the KV cache, which is the memory a model uses to keep track of the conversation so far. TurboQuant has nothing to do with model weights or model size. It does not imply “More AI running locally”.

I have found that many companies seem to exploit this “news rush”, knowing that most AI reporters never read the actual content before posting. One such example is Cursor, which just launched “self-hosted cloud agents that keep your code and tool execution entirely in your own network”. Except that they don’t. Model inference, orchestration, task planning, and the Cursor UI/dashboard all run inside the Cursor cloud in the US. But most newsletters presented this news as if companies could now deploy a private AI agent in their own cloud.

It’s almost like many newsletters and news reporters have forgotten why they write about AI in the first place. What started as a passion maybe ended up as a chore, and in the era of vibe coding it’s tempting to automate things as much as possible. I still write Tech Insights by hand, and for me it’s all about reflection: What is my own opinion of each news item, and how do I believe it will affect the companies I work with? The only way to find out is to actually read the full news and related articles, and then write a summary by hand. AI can automate a lot of things, but it still cannot rewire the neurons in my head for me.

Thank you for being a Tech Insights subscriber!

Listen to Tech Insights on Spotify: Tech Insights 2026 Week 14 on Spotify

Notable model releases last week:

- Mistral Voxtral TTS. Mistral’s first text-to-speech model. 4B parameters, 9 languages.

THIS WEEK’S NEWS:

- Google Releases Gemini 3.1 Flash Live for Real-Time Voice Agents

- Google TurboQuant Compresses LLM Key-Value Cache by 6x

- ARC Prize Launches ARC-AGI-3 Benchmark

- AI Literacy Drives CEO Succession at Coca-Cola and Walmart

- Google Lyria 3 Pro Extends AI Music Generation to Full-Length Tracks

- Cursor Launches Self-Hosted Cloud Agents

- OpenAI Shuts Down Sora and Cancels $1 Billion Disney Deal

- WeChat Integrates OpenClaw AI Agent via ClawBot

- Anthropic Publishes Multi-Agent Harness Design for Long-Running Coding Tasks

Google Releases Gemini 3.1 Flash Live for Real-Time Voice Agents

https://blog.google/innovation-and-ai/technology/developers-tools/build-with-gemini-3-1-flash-live

The News:

- Google launched Gemini 3.1 Flash Live on March 26 as a developer-facing model available in preview via the Gemini Live API in Google AI Studio, targeted at building real-time voice and vision agents.

- The model scores 90.8% on ComplexFuncBench Audio, a multi-step function calling benchmark with complex constraints, compared to lower scores on the previous 2.5 Flash Native Audio model.

- On Scale AI’s Audio MultiChallenge, which tests instruction-following and long-horizon reasoning under real-world interruptions, the model scores 36.1% with “thinking” enabled.

- The model supports over 90 languages for real-time multimodal conversations and includes SynthID watermarking interwoven into all audio output to signal AI-generated content.

- Real-world integrations include design tool Stitch, which uses the API to let users critique and build UI variations via voice, and Ato, an AI companion device for older adults using the multilingual capabilities for daily conversation.

My take: If you want to achieve sub-second voice interaction with an AI model you cannot use speech-to-text to first translate everything into words. You need a speech-to-speech model (S2S). OpenAI has their Realtime API, NVIDIA has PersonaPlex, and now Google has Gemini 3.1 Flash Live. S2S models are interesting in that they process raw audio natively without a separate transcription step, which means having an interaction with them feels quite similar to talking to a real person, at least when it comes to latency.

The gold standard for round-trip latency for AI models is below 300 milliseconds, which is around what you end up with on a phone call where both participants are using Bluetooth headsets. Google hasn’t published any latency specifications for Gemini 3.1 Flash Live, but if it’s close to or below 300 milliseconds it could start to become very difficult to know if you are talking to a machine over the phone. I believe we are very close to having digital phone agents that are impossible to identify as AI callers, for both marketing and support.

Read more:

- Gemini 3.1 Flash Live is here! : r/Bard

- The debut of Gemini 3.1 Flash Live could make it harder to know if you’re talking to a robot – Ars Technica

Google TurboQuant Compresses LLM Key-Value Cache by 6x

https://research.google/blog/turboquant-redefining-ai-efficiency-with-extreme-compression

The News:

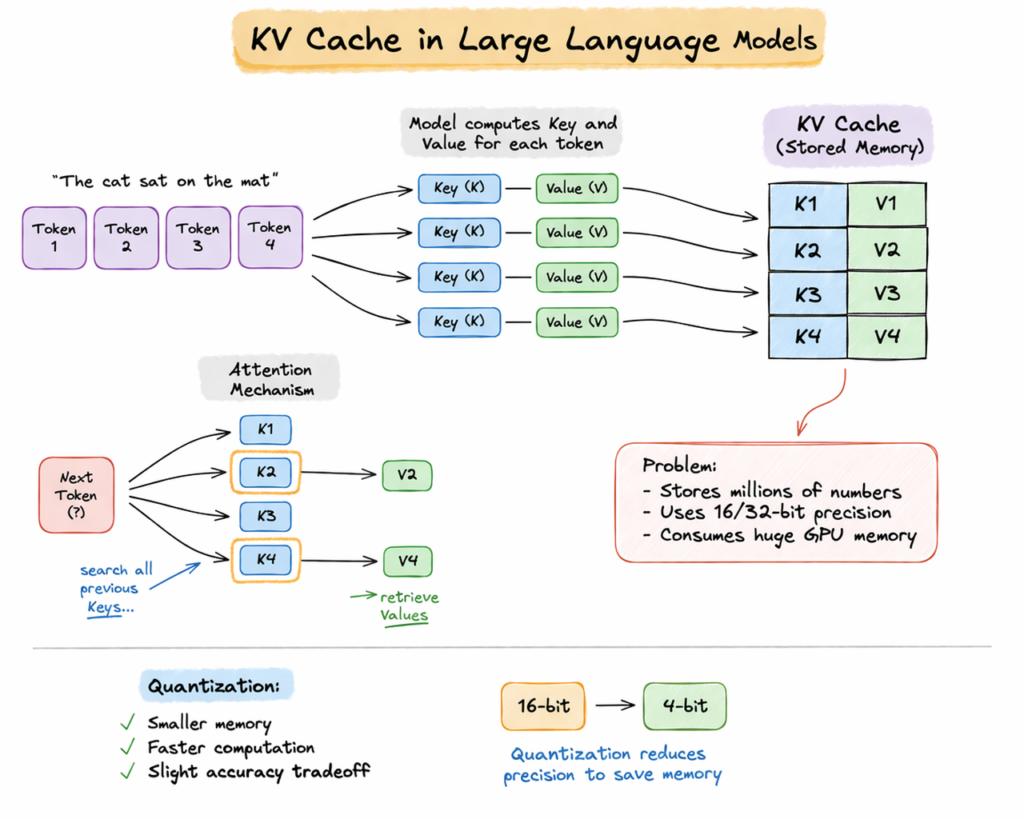

- Google Research published TurboQuant, a vector quantization algorithm that compresses the key-value (KV) cache in large language models to 3 bits per value, reducing memory footprint by at least 6x with no measurable accuracy loss and no model retraining required.

- TurboQuant combines two component algorithms: PolarQuant converts Cartesian data vectors into polar coordinates, eliminating per-block normalization overhead that traditional methods must store; QJL then applies a Johnson-Lindenstrauss transform to the residual error, reducing it to a single sign bit per dimension with zero additional memory overhead.

- At 4-bit precision on NVIDIA H100 GPUs, TurboQuant delivers up to 8x speedup in computing attention logits compared to the 32-bit uncompressed baseline.

- On needle-in-a-haystack retrieval tests across contexts up to 104,000 tokens, TurboQuant achieved 100% recall while maintaining 6x memory reduction.

- The algorithm is data-oblivious, meaning it does not require dataset-specific tuning or large precomputed codebooks, unlike competing approaches such as Product Quantization (PQ) and RaBitQ.

- The paper will be presented at ICLR 2026; Google notes TurboQuant also applies to vector search, with direct relevance to its Search and advertising infrastructure.
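To make the "single sign bit" idea in the list above more concrete, here is a minimal Python sketch of a generic sign-bit Johnson-Lindenstrauss estimator. It is not Google's implementation and it skips the PolarQuant stage entirely. The function names, the stored key norm, and the choice of many more projections than dimensions (to keep the demo stable) are all assumptions made for illustration.

```python
import math
import random


def make_projection(num_rows: int, dim: int, seed: int = 0) -> list[list[float]]:
    """Random Gaussian projection matrix (hypothetical helper, fixed seed)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_rows)]


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def quantize_key(key: list[float], projection: list[list[float]]) -> tuple[list[int], float]:
    """Keep only the sign of each projection (1 bit each) plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    norm = math.sqrt(dot(key, key))
    return signs, norm


def estimate_inner_product(
    query: list[float],
    signs: list[int],
    key_norm: float,
    projection: list[list[float]],
) -> float:
    """Estimate <query, key> from the key's sign bits and the full-precision query.

    For a Gaussian row s: E[sign(s.k) * (s.q)] = sqrt(2/pi) * <q, k> / ||k||,
    so rescaling the average by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    """
    total = sum(sign * dot(row, query) for sign, row in zip(signs, projection))
    return math.sqrt(math.pi / 2) * key_norm * total / len(projection)


if __name__ == "__main__":
    rng = random.Random(1)
    dim = 16
    key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    projection = make_projection(num_rows=4096, dim=dim)
    signs, key_norm = quantize_key(key, projection)
    print("exact   :", dot(query, key))
    print("estimate:", estimate_inner_product(query, signs, key_norm, projection))
```

The point of the sketch is only that attention-style inner products can be approximated from sign bits without any codebook trained on data, which is what "data-oblivious" refers to.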

My take: There have been so many misunderstandings about this news the past week. So let me clear some things up:

- No, TurboQuant does not mean AI models will be 6x smaller so you can run them on cheaper hardware

- No, TurboQuant does not mean AI models will run 8x faster

TurboQuant compresses the KV cache of the models, not the model weights. The KV cache is the memory that grows as a conversation gets longer, storing previously processed tokens. All model weights, which determine how large a model is in the first place, are untouched.

A 6x reduction in KV cache memory means cloud providers can serve longer context windows with fewer H100s, or handle more concurrent users on the same GPU cluster. It does not mean a 16GB Mac Mini suddenly runs larger models. The model itself is the same size. The weights are unchanged. The real impact is on inference economics at scale, not local deployment.
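A quick back-of-the-envelope calculation shows why compressing the KV cache does not shrink the model. The sketch below uses the standard KV cache size formula (keys and values, per layer, per KV head, per token). The model shape is a hypothetical 8B-parameter configuration chosen for illustration, not a figure from Google's post; only the 6x ratio comes from the announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, batch_size=1):
    """Bytes needed to cache keys and values for every token, layer, and KV head."""
    keys_and_values = 2  # one key vector and one value vector per token
    return (keys_and_values * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


def weight_bytes(num_params, bytes_per_param=2):
    """Bytes needed to hold the model weights (16-bit by default)."""
    return num_params * bytes_per_param


def total_memory_bytes(weights, kv_cache, kv_compression=1.0):
    """Weights are untouched; only the KV cache is divided by the compression ratio."""
    return weights + kv_cache / kv_compression


if __name__ == "__main__":
    # Hypothetical model shape, assumed for illustration only.
    weights = weight_bytes(num_params=8_000_000_000)
    cache = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                           seq_len=128_000)

    before = total_memory_bytes(weights, cache)
    after = total_memory_bytes(weights, cache, kv_compression=6.0)

    print(f"weights:           {weights / 1e9:.1f} GB (unchanged)")
    print(f"KV cache, 16-bit:  {cache / 1e9:.1f} GB")
    print(f"KV cache, 6x less: {cache / 6 / 1e9:.1f} GB")
    print(f"total shrinks by:  {before / after:.2f}x")
```

With these made-up numbers, a single 128,000-token sequence needs about as much memory for its cache as the model needs for its weights. Compressing only the cache by 6x shrinks the total by well under 2x, and the weights still have to fit in memory. The savings grow with more concurrent users or longer contexts, which is why this matters to cloud providers and not to someone trying to fit a larger model on a laptop.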

The companies that are negatively affected by this news are all inference optimization startup companies, whose entire margin was built on solving exactly this problem.

Read more:

- Null Hype on X: “Clarifying what TurboQuant actually does” / X

- Google’s TurboQuant Explained: How They Cut LLM Memory by 6x Without Losing Accuracy | by Divy Yadav | Mar, 2026 | Towards AI

ARC Prize Launches ARC-AGI-3 Benchmark

https://arcprize.org/arc-agi/3

The News:

- The ARC Prize Foundation launched ARC-AGI-3 on March 25, a benchmark that shifts from static pattern puzzles to interactive, game-like environments where agents must explore, form goals, and plan multi-step actions without any instructions.

- Unlike ARC-AGI-1 and ARC-AGI-2, which presented agents with a grid to analyze and complete, ARC-AGI-3 places agents inside dynamic environments they have never seen, requiring them to build a world model from raw observations and adjust behavior as new evidence appears.

- Every frontier model currently scores below 1% on the benchmark: Google Gemini Pro leads at 0.37%, followed by GPT-5.4 High at 0.26%, Anthropic Opus at 0.25%, and Grok at 0%. Humans solve 100% of the same environments.

- On the Kaggle leaderboard, the top submission as of launch week scores 0.25, just about twice the 0.12 random-agent baseline, with the $700,000 grand prize still unclaimed.

- Scoring is based on skill-acquisition efficiency: agents are measured against the second-best human action count, penalizing unnecessary steps rather than just checking whether tasks are completed.

- The competition runs through November 2, 2026, with two milestone prize pools of $75,000 total, due June 30 and September 30.

My take: The previous AI benchmark ARC-AGI-2 focused on solving logical puzzles, and all the latest models like Opus 4.6, Gemini 3.1 Pro and GPT-5.4 exceeded human capabilities for that test. ARC-AGI-3 pushes things to the next level by making AI models solve game-like puzzles in environments they have never seen before. All puzzles must also be solved in an optimal way, meaning the AI needs to consider all possible paths before starting with the first move.

This test is not something models have specifically been trained on, but it is a skill that is highly valuable when working with computer tasks in environments they have not been specifically trained on. We just got our first AI model that’s really good at working at a computer (GPT-5.4) and I think we will see massive improvements in both computer use and in ARC-AGI-3 before 2026 is over. Currently all frontier-level models score below 1% on this benchmark, but I estimate we should be seeing beyond-human capabilities on it in around a year. The main reason they fail so badly is that they must solve the puzzles in ARC-AGI-3 in an optimal way, which is one of the main critiques against it.

AI Literacy Drives CEO Succession at Coca-Cola and Walmart

The News:

- Coca-Cola CEO James Quincey and former Walmart CEO Douglas McMillon both cited AI-driven transformation as a factor in their decisions to step down, pointing to a shift in the skills required at the top of large enterprises.

- Quincey, CEO since 2017, will hand over to current COO Henrique Braun on March 31. He told CNBC: “In a pre-AI, a pre-gen-AI mode, we made a lot of progress. But now there’s a huge new shift coming along.”

- Quincey framed the decision as identifying the right successor rather than a performance issue. Coca-Cola posted $47.9 billion in revenue for its most recent full year, up from $35.4 billion in the 2017 fiscal year.

- McMillon, Walmart CEO since 2014, retired in February 2026 and was succeeded by John Furner, former head of Walmart U.S. McMillon told CNBC that with AI, he “could start big transformations, but couldn’t finish.”

- Walmart has deployed AI across supply chain optimization, customer-facing agents, and shopping experiences, and recently partnered with OpenAI to let shoppers make purchases through ChatGPT.

My take: Both Quincey and McMillon depart amid very strong company results, positioning the handoff explicitly as a capability pivot toward leaders more suited to scaling AI systems.

AI has enormous possibilities for reducing costs and boosting efficiency. But the work needs to be controlled from the top. This means that you as a company leader must prepare yourself and your company for one of the largest technological shifts of the modern age. You cannot lean back and let your middle managers run it. That way you will just end up with a myriad of individually designed AI solutions where the ones with the strongest opinions get the most resources.

I am not totally sure Quincey and McMillon departed because they do not understand AI. It might be that they departed because they both are in their primes (age 59 and 61), they have made enough money (Quincey is worth around $166 million, McMillon has a net worth of around $633 million), and they do not want to push themselves and their organizations through this intense period that is about to come. Every organization will have to transform.

Google Lyria 3 Pro Extends AI Music Generation to Full-Length Tracks

https://blog.google/innovation-and-ai/technology/ai/lyria-3-pro

The News:

- Google released Lyria 3 Pro on March 25, one month after Lyria 3, raising the output limit from 30 seconds to 3 minutes and adding structural control over song composition.

- Users can prompt for specific song sections including intros, verses, choruses, and bridges, rather than describing only the overall track sound.

- Lyria 3 Pro is available in Vertex AI (public preview for enterprise), Google AI Studio, the Gemini API, Google Vids, the Gemini app (paid subscribers), and ProducerAI.

- All outputs are embedded with SynthID, Google’s imperceptible audio watermark, and the model does not reproduce named artists’ styles directly; named artists are treated as broad stylistic inspiration only.

- Grammy-winning producer Yung Spielburg used Lyria in the composition of the score for the Google DeepMind short film “Dear Upstairs Neighbors,” and DJ François K used the model in an iterative process for a forthcoming release.

- A companion variant, Lyria 3 Clip, is available for shorter outputs optimized for rapid iteration and social content.

My take: The general consensus among the community seems to be that Lyria 3 sounds better than Suno, but Suno writes better songs than Lyria. Suno is better at vocals, including realism, melodic choices and flow, and is also regarded by many as the better composer. If you produce music with AI you are probably already using both of them, and now the choice seems to be to use Lyria 3 for things like ambient, cinematic and background music, while Suno is the better fit for melodic creativity and vocal performance.

Read more:

- Lyria 3 Pro vs Suno: 5x Pricier, But Does It Sound Better? | FindSkill.ai — Master Any Skill with AI

- Suno vs Lyria : r/SunoAI

Cursor Launches Self-Hosted Cloud Agents

https://cursor.com/blog/self-hosted-cloud-agents

The News:

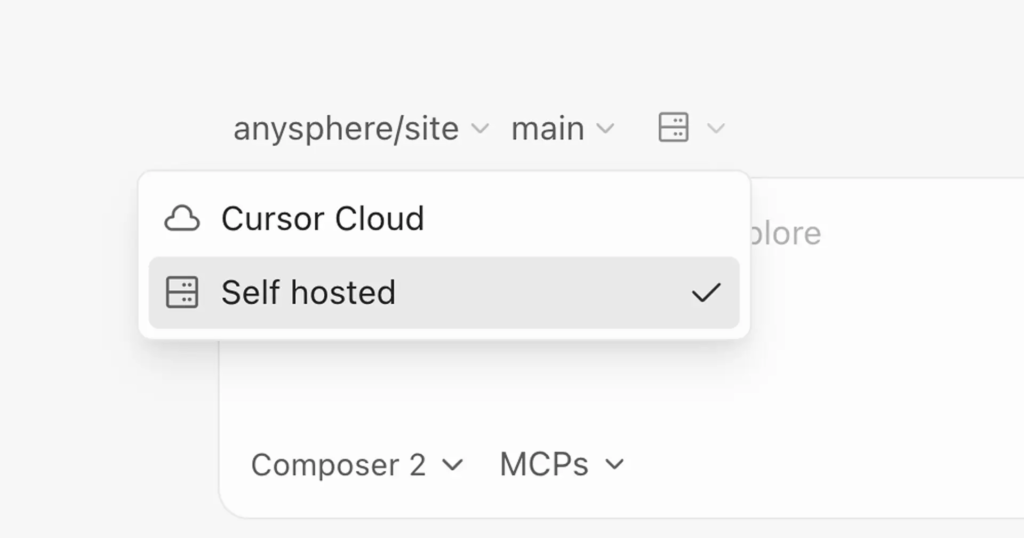

- Cursor has made self-hosted cloud agents generally available, letting enterprise teams run autonomous coding agents entirely within their own network infrastructure, with code, secrets, and build artifacts never leaving the internal environment.

- Each agent runs in an isolated virtual machine with a terminal, browser, and full desktop; it clones repositories, sets up environments, writes and tests code, and pushes pull requests without requiring a connected local machine.

- Workers connect outbound via HTTPS to Cursor’s cloud with no inbound ports, firewall changes, or VPN tunnels required, and are started with a single command: agent worker start.

- For large-scale deployments, Cursor provides a Helm chart and Kubernetes operator; teams define a WorkerDeployment resource and a controller handles scaling, rolling updates, and lifecycle management automatically.

- Agents support multiple models including Composer 2 and frontier models, and can be extended with plugins, MCPs, subagents, rules, and hooks.

“Cursor now supports self-hosted cloud agents that keep your code and tool execution entirely in your own network.”

My take: Last week Cursor re-packaged Kimi 2.5 as “Composer 2”, and this week they are launching AI agents that run “entirely in your own network”. But is this really the case? Not really.

What Cursor launched are AI agents that run as software in your infrastructure, but the foundation model Composer 2 still runs inside the Cursor infrastructure, in the US. What Cursor calls “self-hosted” refers only to where the worker executes tool calls, not where the model runs. In fact, model inference, orchestration, task planning, and the Cursor UI/dashboard all run inside the Cursor cloud. A better description would be “self-hosted execution environment”, but for a company pushing for its next investment round “self-hosted agents” sounds much better.

OpenAI Shuts Down Sora and Cancels $1 Billion Disney Deal

https://variety.com/2026/digital/news/openai-shutting-down-sora-video-disney-1236698277

The News:

- OpenAI announced on March 24 that it is discontinuing Sora, its AI video generation platform launched in late 2024, shutting down the consumer app, the professional web service, and the related API.

- The shutdown also terminates a deal signed in December 2025 with Disney, which had agreed to license characters from Marvel, Pixar, and Star Wars for use on the platform and take a $1 billion stake in OpenAI via stock warrants. No money changed hands before the deal was dissolved.

- OpenAI cited high compute costs as a central reason. Reports suggest Sora was costing approximately $15 million per day in inference costs, with a 30-day user retention rate below 8% among Pro subscribers paying $200 per month.

- The Sora team will redirect resources toward “world simulation research” to support robotics development. OpenAI plans to use video generation technology internally to train robots on real-world physical tasks.

- OpenAI has completed initial development of a new model internally codenamed “Spud,” with CEO Sam Altman indicating a release window of mid-to-late April 2026. The model is expected to integrate into ChatGPT and the OpenAI API rather than ship as a standalone app.

My take: This Saturday Tibo at OpenAI posted on X: “Growth of codex continues to outpace our prediction and we almost ran out of capacity three days in a row”. OpenAI makes $200 from every developer using Codex, and people are using it so much that OpenAI is running out of GPUs to run it. Based on that alone, the decision to stop improving Sora in tough competition with companies like Google and Runway was probably an easy one. I never really liked Sora for video production and never got anything really good out of it, and I know of very few people who actually used it for any real production.

WeChat Integrates OpenClaw AI Agent via ClawBot

The News:

- Tencent launched ClawBot on March 22, a plugin that embeds the open-source OpenClaw AI agent as a native contact inside WeChat, giving the agent direct access to over 1 billion monthly active users.

- Users interact with OpenClaw through standard WeChat chat commands, directing it to complete tasks such as transferring files, sending emails, analyzing data, and making reservations without switching apps.

- ClawBot is part of a broader agent suite Tencent introduced in early March: QClaw targets individual users, Lighthouse serves developers, and WorkBuddy covers enterprise workflows with over 20 skill packages including invoice processing, report generation, and data tasks.

- OpenClaw, originally created by Austrian programmer Peter Steinberger (acquired by OpenAI in February 2026), is an open-source framework that runs locally on user devices and supports a wide range of messaging platforms including Telegram, Slack, WhatsApp, and now WeChat.

- Tencent spent 18 billion yuan (~$2.5 billion USD) on AI in 2025 and has pledged to more than double that figure in 2026.

My take: Tencent did not integrate OpenClaw inside WeChat. What they did is add a plugin called ClawBot, which allows users to interact with their own OpenClaw instances. You still need OpenClaw running on your own server, either on a local machine or on a cloud instance. Then, on your server, install the Tencent OpenClaw CLI tool called @tencent-weixin to get started. For the billion or so people using WeChat every day this is probably the best way to access their own OpenClaw server.

Anthropic Publishes Multi-Agent Harness Design for Long-Running Coding Tasks

https://www.anthropic.com/engineering/harness-design-long-running-apps

The News:

- Anthropic’s Labs team member Prithvi Rajasekaran documented a three-agent architecture (planner, generator, evaluator) built to autonomously produce full-stack applications over multi-hour sessions, addressing two known failure modes in solo agents: context window degradation and self-evaluation bias.

- The evaluator agent uses the Playwright MCP to interact with the live running application, navigating pages, testing UI features, API endpoints, and database states before scoring outputs against defined criteria.

- In a direct comparison for a retro game maker, a solo agent run cost $9 over 20 minutes and produced a broken game where entities did not respond to input. The three-agent harness ran for 6 hours at $200 and produced a playable game with a 16-feature spec, sprite animation, sound, and an integrated Claude agent for level generation.

- The updated harness, tested with Claude Opus 4.6, built a browser-based DAW (Digital Audio Workstation) using the Web Audio API in 3 hours and 50 minutes at $124.70. The generator ran coherently for over two hours without the sprint decomposition that Opus 4.5 required.

- Agent-to-agent communication is handled via files: one agent writes a file, the other reads and responds, either in the same file or a new one. This keeps shared state explicit without requiring direct inter-agent messaging.
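The file-based handoff between planner, generator, and evaluator described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Anthropic's harness: the three functions are stubs standing in for model calls, and the file names (`spec.json`, `build_report.json`, `feedback.json`) and their JSON layout are invented for this example.

```python
import json
import tempfile
from pathlib import Path


def planner(workdir: Path, prompt: str) -> Path:
    """Stub planner: turns a prompt into a feature list and writes it to a file."""
    spec = {"prompt": prompt,
            "features": ["sprite editor", "level editor", "play mode"]}
    path = workdir / "spec.json"
    path.write_text(json.dumps(spec, indent=2))
    return path


def generator(workdir: Path) -> Path:
    """Stub generator: reads the spec and any feedback, 'builds' one feature per round."""
    spec = json.loads((workdir / "spec.json").read_text())
    report_path = workdir / "build_report.json"
    feedback_path = workdir / "feedback.json"

    implemented = (json.loads(report_path.read_text())["implemented"]
                   if report_path.exists() else [])
    # The evaluator's feedback file, if present, decides what to work on next.
    pending = (json.loads(feedback_path.read_text())["missing"]
               if feedback_path.exists() else spec["features"])
    if pending:
        implemented.append(pending[0])

    report_path.write_text(json.dumps({"implemented": implemented}, indent=2))
    return report_path


def evaluator(workdir: Path) -> Path:
    """Stub evaluator: checks the build report against the spec, writes feedback."""
    spec = json.loads((workdir / "spec.json").read_text())
    report = json.loads((workdir / "build_report.json").read_text())
    missing = [f for f in spec["features"] if f not in report["implemented"]]
    path = workdir / "feedback.json"
    path.write_text(json.dumps({"passed": not missing, "missing": missing}, indent=2))
    return path


def run_harness(workdir: Path, prompt: str, max_rounds: int = 10) -> dict:
    """Alternate generator and evaluator until the evaluator passes the build."""
    planner(workdir, prompt)
    for round_number in range(1, max_rounds + 1):
        generator(workdir)
        feedback = json.loads(evaluator(workdir).read_text())
        if feedback["passed"]:
            return {"rounds": round_number, "passed": True}
    return {"rounds": max_rounds, "passed": False}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_harness(Path(tmp), "retro game maker"))
```

Because every handoff is a file on disk, the shared state can be inspected or replayed after a long run, and no agent needs the other agents' full conversation in its context window.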

My take: As is now typical with Anthropic, they only measure whether their models can produce working output, and never measure the actual quality of the code written to produce it. In the article, the only places where Prithvi even mentions the word “quality” are about the visual quality of the output.

If you care nothing about coding standards and beautifully structured code, then please go ahead and use whatever harness you want, like this one, which can produce visually working prototypes at record speed. But if you are working on anything even close to a production system, maybe slow down a bit and give GPT-5.4-high a good try before committing to something like this.